Introduction to Java Basics to Practical Applications: Practical Analysis of Big Data

This tutorial takes you from Java basics to practical big data analysis. It covers core Java (variables, control flow, classes, and collections), the main big data tools (the Hadoop ecosystem, Spark, and Hive), and a practical case built on flight data from OpenFlights. Hadoop MapReduce reads the data and counts the flights arriving at each destination airport, Spark SQL ranks the ten origin airports with the most flights, and Hive runs the same per-airport count as an interactive query.

Java Basics to Practical Application: Big Data Practical Analysis

Introduction

As data volumes grow, the ability to analyze large datasets has become an important skill. This tutorial starts with Java basics and works up to using Java for practical big data analysis.

Java Basics

- Variables, data types and operators

- Control flow (if-else, for, while)

- Classes, objects and methods

- Arrays and collections (lists, maps, sets)
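The topics above can be seen together in one small program. The following is only an illustrative sketch: the class names `JavaBasicsDemo` and `Airport`, the method `countCodes`, and all sample values are hypothetical and chosen to match the flight theme of this tutorial.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;

public class JavaBasicsDemo {

    // A class bundles data (fields) with behavior (methods).
    static class Airport {
        private final String code;
        private final int runways;

        Airport(String code, int runways) {
            this.code = code;
            this.runways = runways;
        }

        String getCode() {
            return code;
        }

        // Hypothetical rule for illustration: three or more runways counts as large.
        boolean isLarge() {
            return runways >= 3;
        }
    }

    // Counts how often each code appears, using a for loop, if-else and a map.
    static Map<String, Integer> countCodes(List<String> codes) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String code : codes) {
            if (counts.containsKey(code)) {
                counts.put(code, counts.get(code) + 1);
            } else {
                counts.put(code, 1);
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // Variables, data types and operators (sample values)
        int flights = 7;
        double averageDelay = 12.5;
        double totalDelay = flights * averageDelay;
        System.out.println("Total delay (minutes): " + totalDelay);

        // Objects and methods
        Airport airport = new Airport("JFK", 4);
        System.out.println(airport.getCode() + " large? " + airport.isLarge());

        // Arrays and collections: copy an array into a list with a while loop
        String[] raw = {"JFK", "LAX", "JFK"};
        List<String> codes = new ArrayList<>();
        int i = 0;
        while (i < raw.length) {
            codes.add(raw[i]);
            i++;
        }
        Set<String> unique = new HashSet<>(codes); // a set drops duplicates
        System.out.println("Counts: " + countCodes(codes));
        System.out.println("Distinct airports: " + unique.size());
    }
}
```

The counting loop in `countCodes` is the same idea that the MapReduce, Spark, and Hive examples later apply to much larger inputs.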

Big Data Analysis Tools

- Hadoop Ecosystem (Hadoop, MapReduce, HDFS)

- Spark

- Hive

Practical Case: Using Java to Analyze Flight Data

Step 1: Get the data

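Download the flight data from the OpenFlights dataset. The steps below assume it has been saved to HDFS as a comma-separated file without a header row (hdfs:///path/to/flights.csv in the examples), with columns in the order used by the Hive table in Step 4: origin, dest, carrier, dep_date, dep_time, arr_date, arr_time.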

Step 2: Read and write data using Hadoop

Use a Hadoop MapReduce job to read the flight records and count the flights arriving at each destination airport.
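To make the map, shuffle, and reduce phases concrete before running anything on a cluster, the sketch below simulates them in memory with plain Java collections. It is not Hadoop code: the class `LocalMapReduceSketch`, its method names, and the three sample lines are hypothetical, and the destination airport is assumed to be the second comma-separated field.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class LocalMapReduceSketch {

    // Map phase: turn one CSV line into zero or one (destination, 1) pair.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        String[] fields = line.split(",");
        if (fields.length > 1) { // skip lines without a destination field
            pairs.add(new SimpleEntry<>(fields[1], 1));
        }
        return pairs;
    }

    // Shuffle phase: group all emitted values by key.
    // Hadoop performs this step between the mapper and the reducer.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce phase: sum the values collected for one key.
    static int reduce(List<Integer> values) {
        int sum = 0;
        for (int value : values) {
            sum += value;
        }
        return sum;
    }

    // Runs the three phases in order and returns flights per destination.
    static Map<String, Integer> run(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : shuffle(pairs).entrySet()) {
            result.put(entry.getKey(), reduce(entry.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical sample records: origin,dest,carrier
        List<String> lines = Arrays.asList("JFK,LAX,AA", "SFO,LAX,UA", "LAX,JFK,DL");
        System.out.println(run(lines)); // prints {JFK=1, LAX=2}
    }
}
```

In a real job, Hadoop distributes the map and reduce calls across machines and handles the shuffle itself. The Hadoop version of the same computation follows.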

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class FlightStats {

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf, "Flight Stats");
        job.setJarByClass(FlightStats.class);
        job.setMapperClass(FlightStatsMapper.class);
        job.setReducerClass(FlightStatsReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        // args[0] is the input path, args[1] the output directory (it must not exist yet)
        FileInputFormat.addInputPath(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));
        // Exit with a non-zero status if the job fails
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }

    // Emits (airport, 1) for every flight record
    public static class FlightStatsMapper extends Mapper<Object, Text, Text, IntWritable> {
        @Override
        public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
            String[] fields = value.toString().split(",");
            // Field 1 is taken to be the destination airport, matching the
            // (origin, dest, ...) column order of the Hive table in Step 4.
            // Lines without that field are skipped.
            if (fields.length > 1) {
                context.write(new Text(fields[1]), new IntWritable(1));
            }
        }
    }

    // Sums the counts emitted for each airport
    public static class FlightStatsReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
        @Override
        public void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            int sum = 0;
            for (IntWritable value : values) {
                sum += value.get();
            }
            context.write(key, new IntWritable(sum));
        }
    }
}

Step 3: Use Spark for further analysis

Use a Spark DataFrame and a SQL query to find the ten origin airports with the most flights.

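import org.apache.spark.sql.Dataset;
import org.apache.spark.sql.Row;
import org.apache.spark.sql.SparkSession;

public class FlightStatsSpark {

    public static void main(String[] args) {
        SparkSession spark = SparkSession.builder().appName("Flight Stats Spark").getOrCreate();

        // Without a header row Spark names the columns _c0, _c1, ..., so assign names explicitly.
        // Assumes the file has the seven columns of the Hive table in Step 4.
        Dataset<Row> flights = spark.read()
                .csv("hdfs:///path/to/flights.csv")
                .toDF("origin", "dest", "carrier", "dep_date", "dep_time", "arr_date", "arr_time");
        flights.createOrReplaceTempView("flights");

        // Ten origin airports with the most flights
        Dataset<Row> top10Airports = spark.sql("SELECT origin, COUNT(*) AS count FROM flights GROUP BY origin ORDER BY count DESC LIMIT 10");
        top10Airports.show(10);

        spark.stop();
    }
}

Step 4: Use Hive for interactive queries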

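Use Hive to analyze the data interactively: create a table for the flight records, load the file into it, and count the flights at each origin airport.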

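-- Table layout for the flight data (one comma-separated record per line)
CREATE TABLE flights (
    origin STRING,
    dest STRING,
    carrier STRING,
    dep_date STRING,
    dep_time STRING,
    arr_date STRING,
    arr_time STRING
)
ROW FORMAT DELIMITED FIELDS TERMINATED BY ','
STORED AS TEXTFILE;

-- LOAD DATA INPATH moves the file from its HDFS location into the table's storage directory
LOAD DATA INPATH 'hdfs:///path/to/flights.csv' OVERWRITE INTO TABLE flights;

-- Ten origin airports with the most flights
SELECT origin, COUNT(*) AS count
FROM flights
GROUP BY origin
ORDER BY count DESC
LIMIT 10;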
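To check what the query computes, the same group, count, sort, and limit logic can be reproduced in plain Java on a small local sample. This is a sketch only: the class `TopAirportsSketch`, the method `topOrigins`, and the sample rows are hypothetical, and ties are broken alphabetically here, which the SQL query does not guarantee.

```java
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

public class TopAirportsSketch {

    // Equivalent of: SELECT origin, COUNT(*) ... GROUP BY origin ORDER BY count DESC LIMIT n
    static List<Map.Entry<String, Long>> topOrigins(List<String> rows, int limit) {
        // GROUP BY origin with COUNT(*): the origin is assumed to be the first field
        Map<String, Long> counts = rows.stream()
                .map(row -> row.split(",")[0])
                .collect(Collectors.groupingBy(origin -> origin, Collectors.counting()));

        // ORDER BY count DESC (ties by airport code), then LIMIT
        return counts.entrySet().stream()
                .sorted(Map.Entry.<String, Long>comparingByValue(Comparator.reverseOrder())
                        .thenComparing(Map.Entry.<String, Long>comparingByKey()))
                .limit(limit)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Hypothetical sample records: origin,dest
        List<String> rows = Arrays.asList(
                "JFK,LAX", "JFK,SFO", "LAX,JFK", "SFO,JFK", "JFK,ORD", "LAX,ORD");
        for (Map.Entry<String, Long> entry : topOrigins(rows, 2)) {
            System.out.println(entry.getKey() + "\t" + entry.getValue());
        }
    }
}
```

Running the sketch on a few hand-checked rows is a quick way to confirm that the Hive or Spark result on the full dataset means what you expect.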

Conclusion

This tutorial covered the basics of Java and showed how to apply them to practical big data analysis. With Hadoop, Spark, and Hive, you can process large datasets efficiently and extract useful insights from them.

The above is the detailed content of Introduction to Java Basics to Practical Applications: Practical Analysis of Big Data. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Java Spring Interview Questions

Aug 30, 2024 pm 04:29 PM

Java Spring Interview Questions

Aug 30, 2024 pm 04:29 PM

In this article, we have kept the most asked Java Spring Interview Questions with their detailed answers. So that you can crack the interview.

Break or return from Java 8 stream forEach?

Feb 07, 2025 pm 12:09 PM

Break or return from Java 8 stream forEach?

Feb 07, 2025 pm 12:09 PM

Java 8 introduces the Stream API, providing a powerful and expressive way to process data collections. However, a common question when using Stream is: How to break or return from a forEach operation? Traditional loops allow for early interruption or return, but Stream's forEach method does not directly support this method. This article will explain the reasons and explore alternative methods for implementing premature termination in Stream processing systems. Further reading: Java Stream API improvements Understand Stream forEach The forEach method is a terminal operation that performs one operation on each element in the Stream. Its design intention is

Java Made Simple: A Beginner's Guide to Programming Power

Oct 11, 2024 pm 06:30 PM

Java Made Simple: A Beginner's Guide to Programming Power

Oct 11, 2024 pm 06:30 PM

Java Made Simple: A Beginner's Guide to Programming Power Introduction Java is a powerful programming language used in everything from mobile applications to enterprise-level systems. For beginners, Java's syntax is simple and easy to understand, making it an ideal choice for learning programming. Basic Syntax Java uses a class-based object-oriented programming paradigm. Classes are templates that organize related data and behavior together. Here is a simple Java class example: publicclassPerson{privateStringname;privateintage;

Create the Future: Java Programming for Absolute Beginners

Oct 13, 2024 pm 01:32 PM

Create the Future: Java Programming for Absolute Beginners

Oct 13, 2024 pm 01:32 PM

Java is a popular programming language that can be learned by both beginners and experienced developers. This tutorial starts with basic concepts and progresses through advanced topics. After installing the Java Development Kit, you can practice programming by creating a simple "Hello, World!" program. After you understand the code, use the command prompt to compile and run the program, and "Hello, World!" will be output on the console. Learning Java starts your programming journey, and as your mastery deepens, you can create more complex applications.

Java Program to Find the Volume of Capsule

Feb 07, 2025 am 11:37 AM

Java Program to Find the Volume of Capsule

Feb 07, 2025 am 11:37 AM

Capsules are three-dimensional geometric figures, composed of a cylinder and a hemisphere at both ends. The volume of the capsule can be calculated by adding the volume of the cylinder and the volume of the hemisphere at both ends. This tutorial will discuss how to calculate the volume of a given capsule in Java using different methods. Capsule volume formula The formula for capsule volume is as follows: Capsule volume = Cylindrical volume Volume Two hemisphere volume in, r: The radius of the hemisphere. h: The height of the cylinder (excluding the hemisphere). Example 1 enter Radius = 5 units Height = 10 units Output Volume = 1570.8 cubic units explain Calculate volume using formula: Volume = π × r2 × h (4

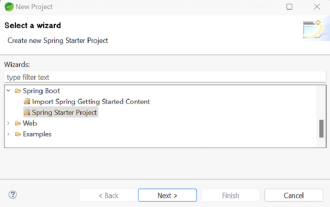

How to Run Your First Spring Boot Application in Spring Tool Suite?

Feb 07, 2025 pm 12:11 PM

How to Run Your First Spring Boot Application in Spring Tool Suite?

Feb 07, 2025 pm 12:11 PM

Spring Boot simplifies the creation of robust, scalable, and production-ready Java applications, revolutionizing Java development. Its "convention over configuration" approach, inherent to the Spring ecosystem, minimizes manual setup, allo

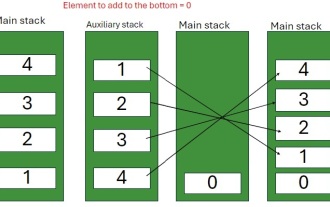

Java Program to insert an element at the Bottom of a Stack

Feb 07, 2025 am 11:59 AM

Java Program to insert an element at the Bottom of a Stack

Feb 07, 2025 am 11:59 AM

A stack is a data structure that follows the LIFO (Last In, First Out) principle. In other words, The last element we add to a stack is the first one to be removed. When we add (or push) elements to a stack, they are placed on top; i.e. above all the

Comparing Two ArrayList In Java

Feb 07, 2025 pm 12:03 PM

Comparing Two ArrayList In Java

Feb 07, 2025 pm 12:03 PM

This guide explores several Java methods for comparing two ArrayLists. Successful comparison requires both lists to have the same size and contain identical elements. Methods for Comparing ArrayLists in Java Several approaches exist for comparing Ar