Photoshop 非常漂亮的3D小屋

本教程可能是翻译国外的教程。中文部分翻译的不是很好。很多过程的意思都没有翻译正确。不过原作者的教写的非常详细,我们只要参照过程图来做,还是可以做出来的。

最终效果

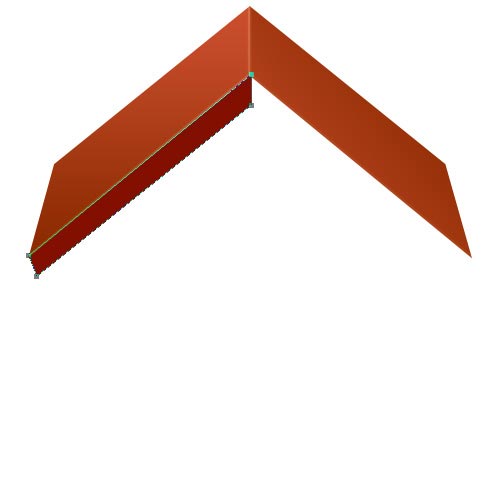

1、首先,创建500px * 500ps白色背景的文件。选择钢笔工具(P)和作出如下所示的一个形状。

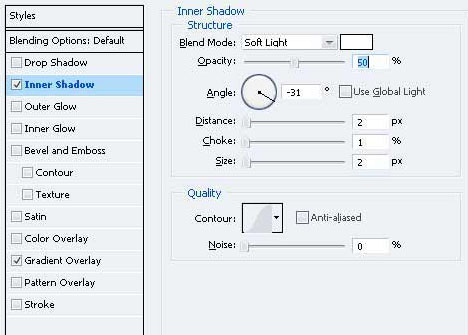

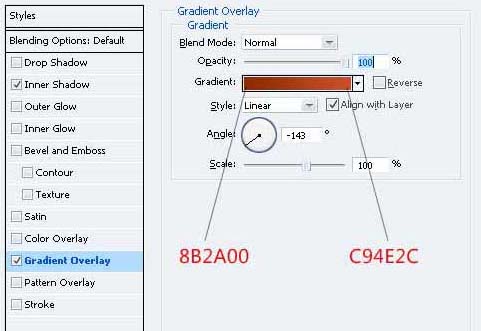

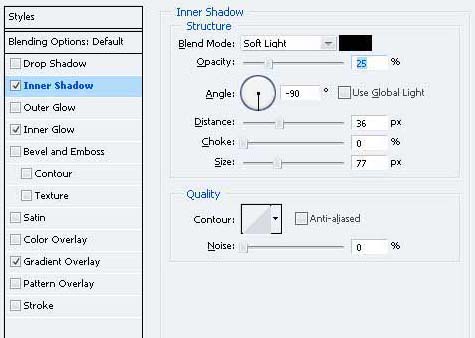

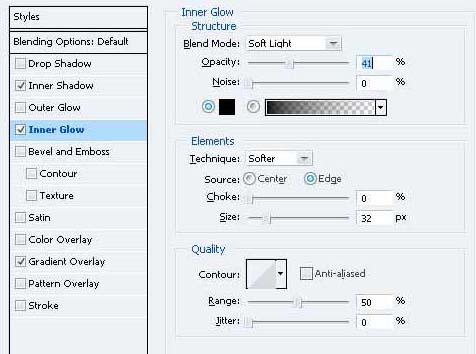

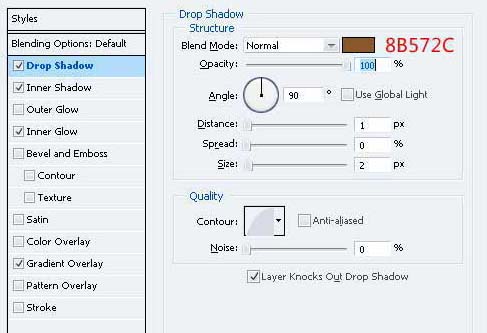

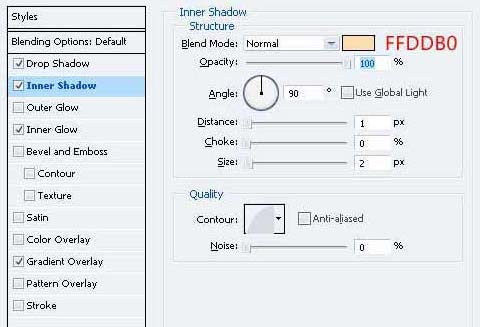

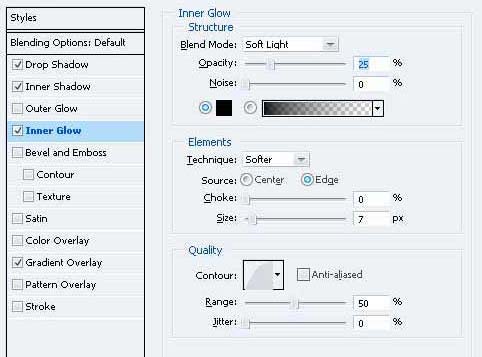

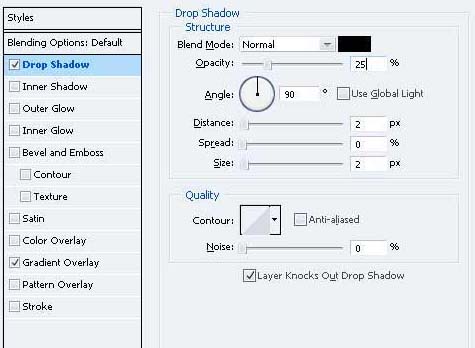

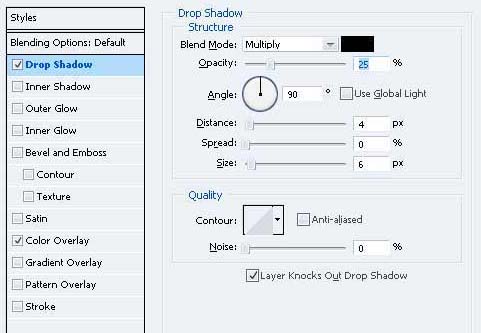

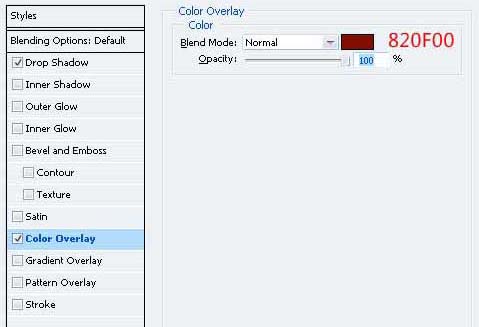

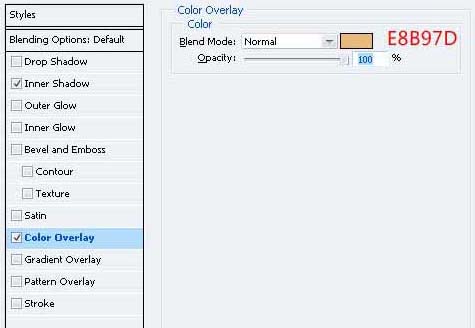

2、双击图层调出图层样式,参数设置如下图。

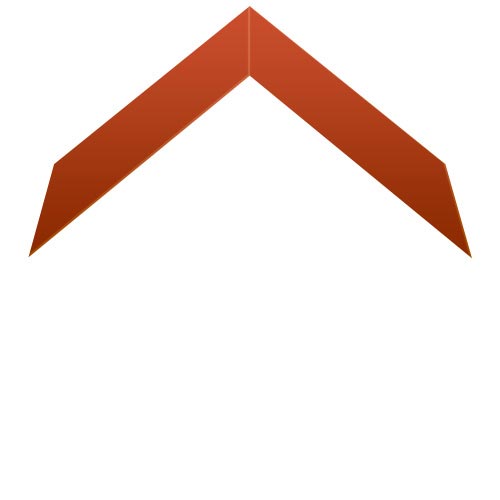

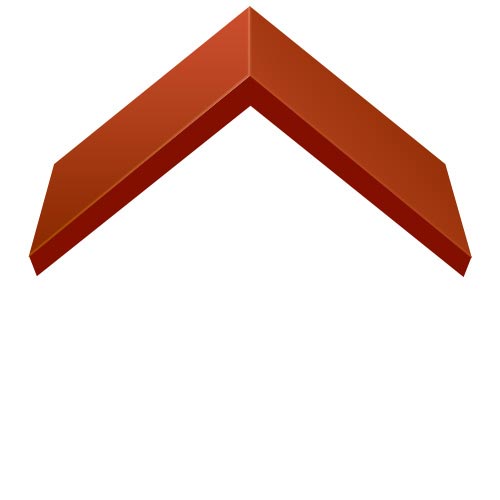

3、把做好的图形复制一层,执行:编辑“>变换”水平翻转,把两个部分对接起来。

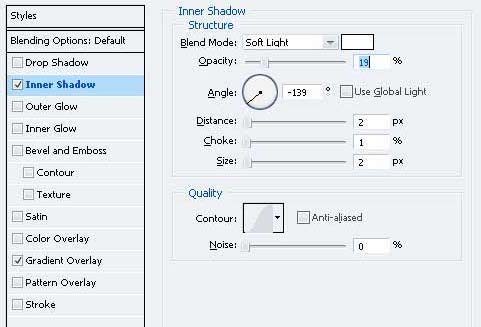

4、由于类似的颜色,形状给这两个单位看看屋顶。 有一个需要修正它。 打开重复的层图层样式,并应用以下更改。

5、设置前景色“830F00” 绘制一个这样的形状如下所示的钢笔工具(规划)。 命名为“屋顶左”。

6、把刚才做好的图形复制一层,命名为“屋顶的右”。进入“编辑>变换”水平翻转和移动向右重复的形状,让你得到下图所示的效果。

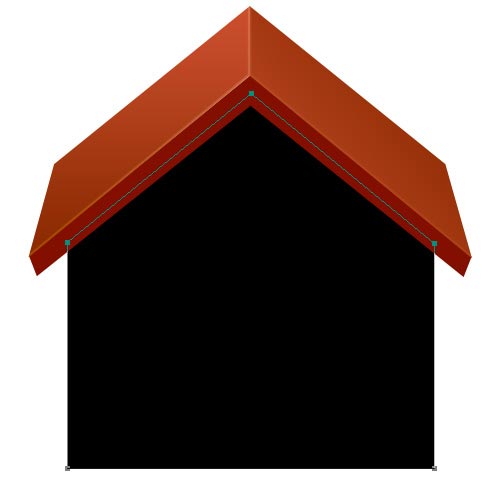

7、在背景图层上面新建一个图层,用钢笔工具勾出下图所示的路径填充黑色,将其命名为“身体。”

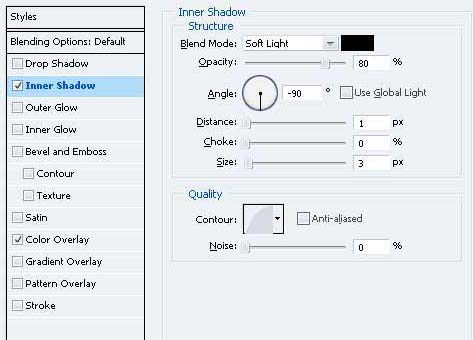

8、双击层并给予这些图层样式。

9、您需要把房子的屋顶加上影子。为此,命令的“屋顶的左侧”层上的“屋顶的右侧”层总结两个层次的选择,然后命令+按住Shift键单击。移动选择下来,在新的图层填充颜色“5F5343的选择。”

10、转到滤镜“>模糊”高斯模糊,进入10px然后单击确定。

#p#

11、您可能注意到,经过过滤器已被应用,阴影是在屋外的身体,这看起来不正确流向。要修正它的“身体”层,Ctrl键单击,按Command + Shift +我颠倒选择。与“影子”层选择,按Delete键。

12、现在,您需要添加一个房子的突出部分, -这就是门。选择矩形选框工具(M)和在新的图层填充黑色选择。

13、给门下面的图层样式。

14、现在,我们需要添加一些细节的大门。选择圆角矩形工具(按Shift + U)和绘制的3px半径黑色矩形。

15、去它的混合选项并应用这些设置。

16、重复的形状和移动下来,让你有这样的事情。

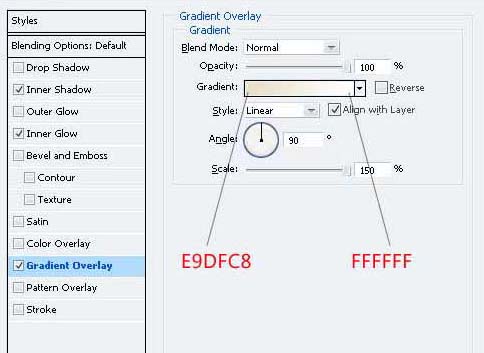

17、现在可以添加到门。椭圆工具使用(ü)就门口一个小圆圈。

18、给圆加阴影和径向渐变。

19、您可以添加一门上面的板。使用钢笔工具(规划)作出如下所示的一个形状。

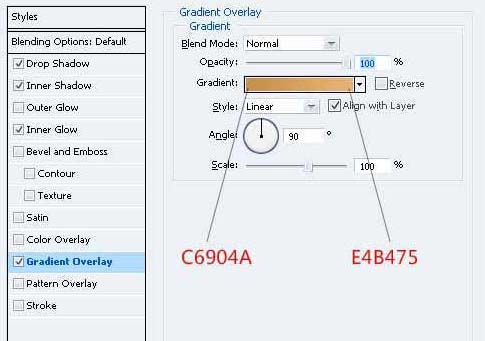

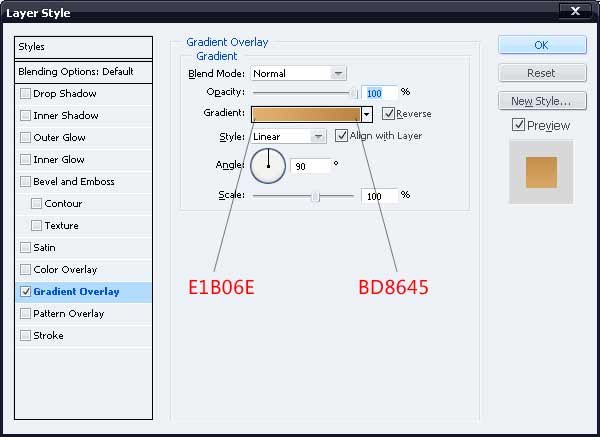

20、去它的混合选项,并给它一个作为屋顶类似的语气渐变叠加。

#p#

21、用钢笔工具(规划),再作类似下面这一形状。

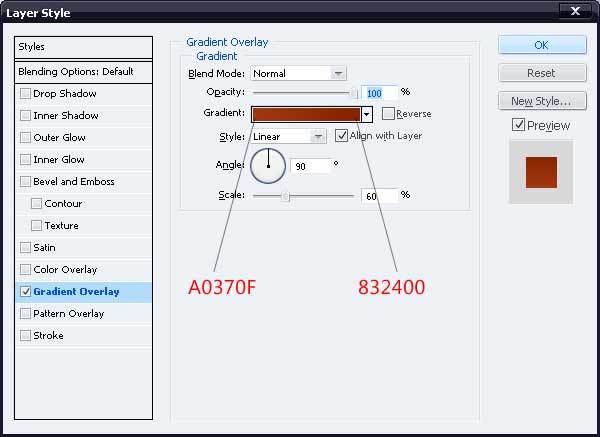

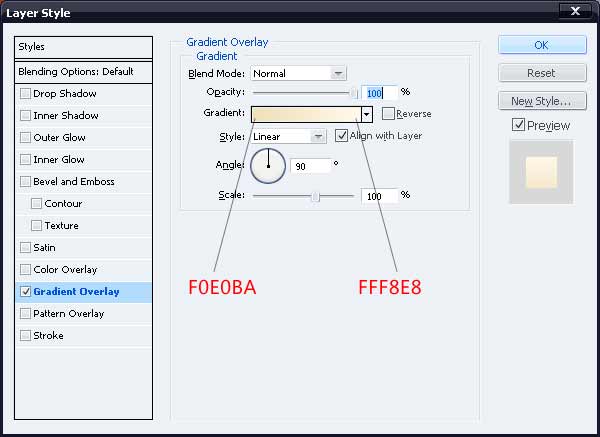

22、给下面的形状图层样式。

23、创建一个新层,填补它与黑色的选择。 确保这层下面的“门”在图层面板层上。

24、给黑条对下列颜色渐变叠加。

25、作为进一步的细节,您可以添加一个门,一步房子。 为喜欢与钢笔工具(规划)1以下的形状。

26、加上渐变叠加。

27、为了让大门有进一步的3D界面,添加一些厚度它。 设置前景色为“A26431”,并以此为如下所示的一个形状。

28、现在是时候制作窗户的时候。通过填写开始做一个新层上有一个黑色的选择等。

29、使用矩形选框工具(米),填充白色显示一个新的层中进行选择。

30、还要向窗户混合选项截面,并给予这些样式。

#p#

31、现在,你需要快门。 就像你所取得的大门,作出快门并在一个窗口的正面。 制作它的复制和移动到另一边,给予阴影的百叶窗,如果你想要的。

32、只要增加细节,添加一个平板,像你的窗口没有向门口。 唯一的区别是,你需要运用图层样式的大门一步的平板您为窗口决策。

33、创建一个图层组和它提出的所有层,构成了窗口。 复制层设置了两次并将它转换为60%,其原始大小。 放在门右侧的小窗口。

34、现在,您可以添加一个烟囱的房子。 创建一个新层,并就此事,填充黑色选择。

35、给予这些色彩的渐变叠加。

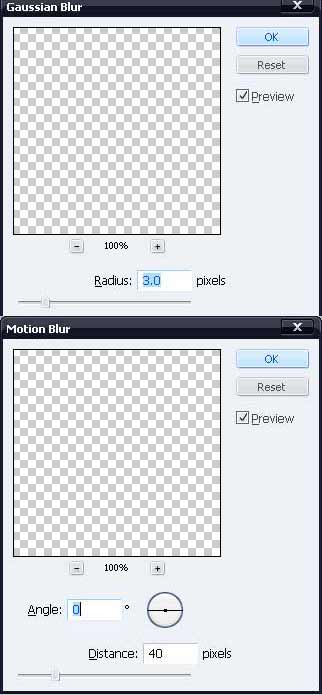

36、请有类似下面一让烟囱看三维形状。

37、再作其它的烟囱颜色的形状。

38、给这些图形加上渐变色。

39、设置前景色“D6C08D”,并作出这样的形状。

#p#

40、现在添加阴影,房子的图标基地。 创建一个新层,然后使用矩形选框工具(米)来填充黑色选择。

41、执行:滤镜“>模糊”高斯模糊,然后滤镜“>模糊”,参数设置如下图。

42、制作一个类似的阴影门一步。您可以设置从80-90%或20-30%的阴影不透明度。我加了一些草,完成最终效果。

最终效果。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Why is Gaussian Splatting so popular in autonomous driving that NeRF is starting to be abandoned?

Jan 17, 2024 pm 02:57 PM

Why is Gaussian Splatting so popular in autonomous driving that NeRF is starting to be abandoned?

Jan 17, 2024 pm 02:57 PM

Written above & the author’s personal understanding Three-dimensional Gaussiansplatting (3DGS) is a transformative technology that has emerged in the fields of explicit radiation fields and computer graphics in recent years. This innovative method is characterized by the use of millions of 3D Gaussians, which is very different from the neural radiation field (NeRF) method, which mainly uses an implicit coordinate-based model to map spatial coordinates to pixel values. With its explicit scene representation and differentiable rendering algorithms, 3DGS not only guarantees real-time rendering capabilities, but also introduces an unprecedented level of control and scene editing. This positions 3DGS as a potential game-changer for next-generation 3D reconstruction and representation. To this end, we provide a systematic overview of the latest developments and concerns in the field of 3DGS for the first time.

Learn about 3D Fluent emojis in Microsoft Teams

Apr 24, 2023 pm 10:28 PM

Learn about 3D Fluent emojis in Microsoft Teams

Apr 24, 2023 pm 10:28 PM

You must remember, especially if you are a Teams user, that Microsoft added a new batch of 3DFluent emojis to its work-focused video conferencing app. After Microsoft announced 3D emojis for Teams and Windows last year, the process has actually seen more than 1,800 existing emojis updated for the platform. This big idea and the launch of the 3DFluent emoji update for Teams was first promoted via an official blog post. Latest Teams update brings FluentEmojis to the app Microsoft says the updated 1,800 emojis will be available to us every day

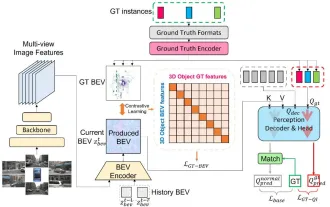

CLIP-BEVFormer: Explicitly supervise the BEVFormer structure to improve long-tail detection performance

Mar 26, 2024 pm 12:41 PM

CLIP-BEVFormer: Explicitly supervise the BEVFormer structure to improve long-tail detection performance

Mar 26, 2024 pm 12:41 PM

Written above & the author’s personal understanding: At present, in the entire autonomous driving system, the perception module plays a vital role. The autonomous vehicle driving on the road can only obtain accurate perception results through the perception module. The downstream regulation and control module in the autonomous driving system makes timely and correct judgments and behavioral decisions. Currently, cars with autonomous driving functions are usually equipped with a variety of data information sensors including surround-view camera sensors, lidar sensors, and millimeter-wave radar sensors to collect information in different modalities to achieve accurate perception tasks. The BEV perception algorithm based on pure vision is favored by the industry because of its low hardware cost and easy deployment, and its output results can be easily applied to various downstream tasks.

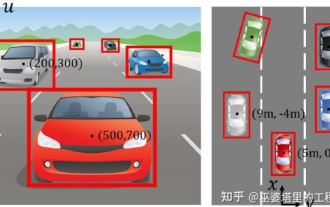

Choose camera or lidar? A recent review on achieving robust 3D object detection

Jan 26, 2024 am 11:18 AM

Choose camera or lidar? A recent review on achieving robust 3D object detection

Jan 26, 2024 am 11:18 AM

0.Written in front&& Personal understanding that autonomous driving systems rely on advanced perception, decision-making and control technologies, by using various sensors (such as cameras, lidar, radar, etc.) to perceive the surrounding environment, and using algorithms and models for real-time analysis and decision-making. This enables vehicles to recognize road signs, detect and track other vehicles, predict pedestrian behavior, etc., thereby safely operating and adapting to complex traffic environments. This technology is currently attracting widespread attention and is considered an important development area in the future of transportation. one. But what makes autonomous driving difficult is figuring out how to make the car understand what's going on around it. This requires that the three-dimensional object detection algorithm in the autonomous driving system can accurately perceive and describe objects in the surrounding environment, including their locations,

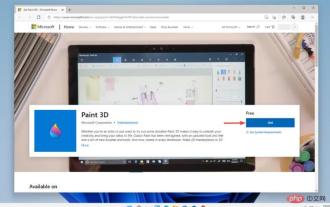

Paint 3D in Windows 11: Download, Installation, and Usage Guide

Apr 26, 2023 am 11:28 AM

Paint 3D in Windows 11: Download, Installation, and Usage Guide

Apr 26, 2023 am 11:28 AM

When the gossip started spreading that the new Windows 11 was in development, every Microsoft user was curious about how the new operating system would look like and what it would bring. After speculation, Windows 11 is here. The operating system comes with new design and functional changes. In addition to some additions, it comes with feature deprecations and removals. One of the features that doesn't exist in Windows 11 is Paint3D. While it still offers classic Paint, which is good for drawers, doodlers, and doodlers, it abandons Paint3D, which offers extra features ideal for 3D creators. If you are looking for some extra features, we recommend Autodesk Maya as the best 3D design software. like

Get a virtual 3D wife in 30 seconds with a single card! Text to 3D generates a high-precision digital human with clear pore details, seamlessly connecting with Maya, Unity and other production tools

May 23, 2023 pm 02:34 PM

Get a virtual 3D wife in 30 seconds with a single card! Text to 3D generates a high-precision digital human with clear pore details, seamlessly connecting with Maya, Unity and other production tools

May 23, 2023 pm 02:34 PM

ChatGPT has injected a dose of chicken blood into the AI industry, and everything that was once unthinkable has become basic practice today. Text-to-3D, which continues to advance, is regarded as the next hotspot in the AIGC field after Diffusion (images) and GPT (text), and has received unprecedented attention. No, a product called ChatAvatar has been put into low-key public beta, quickly garnering over 700,000 views and attention, and was featured on Spacesoftheweek. △ChatAvatar will also support Imageto3D technology that generates 3D stylized characters from AI-generated single-perspective/multi-perspective original paintings. The 3D model generated by the current beta version has received widespread attention.

An in-depth interpretation of the 3D visual perception algorithm for autonomous driving

Jun 02, 2023 pm 03:42 PM

An in-depth interpretation of the 3D visual perception algorithm for autonomous driving

Jun 02, 2023 pm 03:42 PM

For autonomous driving applications, it is ultimately necessary to perceive 3D scenes. The reason is simple. A vehicle cannot drive based on the perception results obtained from an image. Even a human driver cannot drive based on an image. Because the distance of objects and the depth information of the scene cannot be reflected in the 2D perception results, this information is the key for the autonomous driving system to make correct judgments on the surrounding environment. Generally speaking, the visual sensors (such as cameras) of autonomous vehicles are installed above the vehicle body or on the rearview mirror inside the vehicle. No matter where it is, what the camera gets is the projection of the real world in the perspective view (PerspectiveView) (world coordinate system to image coordinate system). This view is very similar to the human visual system,

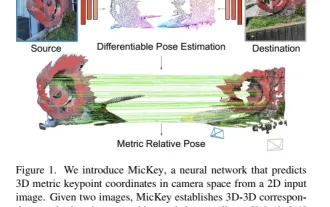

The latest from Oxford University! Mickey: 2D image matching in 3D SOTA! (CVPR\'24)

Apr 23, 2024 pm 01:20 PM

The latest from Oxford University! Mickey: 2D image matching in 3D SOTA! (CVPR\'24)

Apr 23, 2024 pm 01:20 PM

Project link written in front: https://nianticlabs.github.io/mickey/ Given two pictures, the camera pose between them can be estimated by establishing the correspondence between the pictures. Typically, these correspondences are 2D to 2D, and our estimated poses are scale-indeterminate. Some applications, such as instant augmented reality anytime, anywhere, require pose estimation of scale metrics, so they rely on external depth estimators to recover scale. This paper proposes MicKey, a keypoint matching process capable of predicting metric correspondences in 3D camera space. By learning 3D coordinate matching across images, we are able to infer metric relative