Obtaining cookies automatically and refreshing them when they expire

This article shows how to obtain cookies automatically and how to refresh them once they expire, along with the points to watch out for. The following is a practical case.

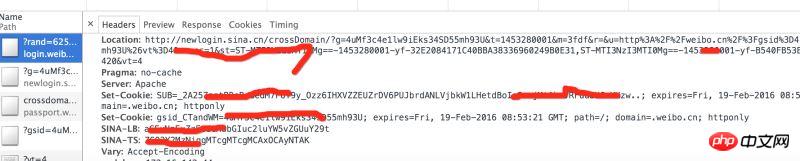

This article implements automatic acquisition of cookies and automatic refresh when they expire. Much of the content on social networking sites is only available after logging in. Take Weibo as an example: without logging in, you can only see the top ten posts of "big V" (verified) accounts. Staying logged in requires cookies. The example here logs in to www.weibo.cn; the login page, opened in Chrome, is http://login.weibo.cn/login/

Implementation steps:

1. Use selenium to log in automatically, obtain the cookies, and save them to files.
2. Read the cookies back and check their expiry time; if any cookie has expired, run step 1 again.
3. When requesting other web pages, send the cookies to maintain the login status.

Step 1: Get cookies online

Use selenium + PhantomJS to simulate a browser login and obtain the cookies. There are usually several cookies, and each one is stored in its own file with the .weibo suffix.

    def get_cookie_from_network():
        import pickle
        from selenium import webdriver

        url_login = 'http://login.weibo.cn/login/'
        # PhantomJS and find_element_by_xpath require Selenium 3.x; Selenium 4 removed both.
        driver = webdriver.PhantomJS()
        driver.get(url_login)
        driver.find_element_by_xpath('//input[@type="text"]').send_keys('your_weibo_account')  # replace with your Weibo account
        driver.find_element_by_xpath('//input[@type="password"]').send_keys('your_weibo_password')  # replace with your Weibo password
        driver.find_element_by_xpath('//input[@type="submit"]').click()  # click the login button

        # Get the cookie information
        cookie_list = driver.get_cookies()
        print(cookie_list)
        cookie_dict = {}
        for cookie in cookie_list:
            # Write each cookie to its own file (pickle needs binary mode)
            with open(cookie['name'] + '.weibo', 'wb') as f:
                pickle.dump(cookie, f)
            if 'name' in cookie and 'value' in cookie:
                cookie_dict[cookie['name']] = cookie['value']
        return cookie_dict


    def get_cookie_from_cache():

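        """Step 2: load cookies from the .weibo files; return {} if any has expired."""
        import os
        import pickle
        import time

        cookie_dict = {}
        for parent, dirnames, filenames in os.walk('./'):
            for filename in filenames:
                if filename.endswith('.weibo'):
                    print(filename)
                    # Open the file relative to the directory currently being walked
                    with open(os.path.join(parent, filename), 'rb') as f:
                        d = pickle.load(f)
                    if 'name' in d and 'value' in d and 'expiry' in d:
                        expiry_date = int(d['expiry'])
                        if expiry_date > int(time.time()):
                            cookie_dict[d['name']] = d['value']
                        else:
                            # One expired cookie invalidates the whole cache
                            return {}
        return cookie_dict


    def get_cookie():
        # Prefer the cached cookies; log in again only when the cache is empty or expired
        cookie_dict = get_cookie_from_cache()
        if not cookie_dict:
            cookie_dict = get_cookie_from_network()
        return cookie_dict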
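The cache-then-login logic can also be sketched in Python 3 with only the standard library. This is a minimal sketch under several assumptions, not the article's implementation: it stores all cookies in one JSON file instead of one pickle file per cookie, the names `save_cookies`, `load_valid_cookies` and `get_cookies` are made up for illustration, and `fetch` stands for any callable that returns a list of cookie dicts in the shape Selenium's `driver.get_cookies()` produces. Unlike the code above, it keeps cookies that carry no `expiry` field (session cookies) rather than skipping them.

```python
import json
import time
from pathlib import Path


def save_cookies(cookie_list, path):
    """Write a list of Selenium-style cookie dicts to a single JSON file."""
    Path(path).write_text(json.dumps(cookie_list), encoding="utf-8")


def load_valid_cookies(path, now=None):
    """Return {name: value}, or {} if the file is missing or any cookie has expired."""
    now = time.time() if now is None else now
    cache_file = Path(path)
    if not cache_file.exists():
        return {}
    cookies = {}
    for cookie in json.loads(cache_file.read_text(encoding="utf-8")):
        expiry = cookie.get("expiry")
        if expiry is not None and expiry <= now:
            return {}  # one stale cookie invalidates the whole cache
        cookies[cookie["name"]] = cookie["value"]
    return cookies


def get_cookies(path, fetch):
    """Use the cache while it is valid; otherwise call fetch() and save the result."""
    cookies = load_valid_cookies(path)
    if not cookies:
        cookie_list = fetch()  # hypothetical callback, e.g. a Selenium login
        save_cookies(cookie_list, path)
        cookies = {c["name"]: c["value"] for c in cookie_list}
    return cookies
```

Passing the login step in as `fetch` keeps the expiry check testable without a browser: a call such as `get_cookies("cookies.json", my_login)` only triggers `my_login` when the file is missing or a cookie has expired.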

    def get_weibo_list(user_id):
        """Step 3: request a page with the cookies attached to stay logged in."""
        import requests
        from bs4 import BeautifulSoup as bs

        cookdic = get_cookie()
        url = 'http://weibo.cn/stocknews88'
        headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/31.0.1650.57 Safari/537.36'}
        timeout = 5
        r = requests.get(url, headers=headers, cookies=cookdic, timeout=timeout)
        soup = bs(r.text, 'lxml')
        ...
        # Parse the page with BeautifulSoup
        ...
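When `requests` receives a `cookies=` dictionary, it sends the pairs to the server as a single `Cookie` request header. The sketch below shows that step with the standard library only, so the header can be inspected without any network access. The helper names `cookie_header` and `build_request` and the cookie names in the example are assumptions made for illustration; they are not part of the original code or of Weibo's API.

```python
import urllib.request


def cookie_header(cookie_dict):
    """Join {name: value} pairs in the form a Cookie request header uses."""
    return "; ".join(f"{name}={value}" for name, value in cookie_dict.items())


def build_request(url, cookie_dict, user_agent="Mozilla/5.0"):
    """Build (but do not send) a GET request that carries the cookies."""
    return urllib.request.Request(
        url,
        headers={"User-Agent": user_agent, "Cookie": cookie_header(cookie_dict)},
    )


# Placeholder cookie names and values, for illustration only
req = build_request("http://weibo.cn/stocknews88", {"session_id": "abc123", "token": "xyz"})
print(req.get_header("Cookie"))
```

To actually send the request, pass `req` to `urllib.request.urlopen(req, timeout=5)`. If the server answers with the login page instead of the expected content, the cookies are most likely no longer valid and the login step has to run again.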

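The login function `get_cookie_from_network` calls `webdriver.PhantomJS()` and `find_element_by_xpath`, which current Selenium 4 releases no longer provide. A rough equivalent for Selenium 4 might look like the sketch below. It assumes that Chrome and a Selenium 4 installation are available and that the login form still matches the XPath expressions used in this article; the function names `cookies_from_login` and `make_headless_chrome` are hypothetical. The login helper accepts any driver object, so it can be exercised with a stand-in driver before trying it against the real site.

```python
def cookies_from_login(driver, login_url, username, password):
    """Log in through a Selenium-style driver and return {name: value}."""
    driver.get(login_url)
    # "xpath" is the locator strategy string that selenium's By.XPATH stands for
    driver.find_element("xpath", '//input[@type="text"]').send_keys(username)
    driver.find_element("xpath", '//input[@type="password"]').send_keys(password)
    driver.find_element("xpath", '//input[@type="submit"]').click()
    return {
        cookie["name"]: cookie["value"]
        for cookie in driver.get_cookies()
        if "name" in cookie and "value" in cookie
    }


def make_headless_chrome():
    """Create a headless Chrome driver (assumes Selenium 4 and Chrome are installed)."""
    from selenium import webdriver  # imported lazily so the helper above has no hard dependency

    options = webdriver.ChromeOptions()
    options.add_argument("--headless=new")
    return webdriver.Chrome(options=options)
```

A call would then read `cookies_from_login(make_headless_chrome(), 'http://login.weibo.cn/login/', account, password)`, followed by `driver.quit()` in real use. The login page may also have changed since this article was written, so the XPath expressions should be checked against the current form.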
