When a Java developer asked me how to deploy their Spring Boot API on AWS ECS, I saw it as the perfect opportunity to dive into the latest updates on the CDKTF (Cloud Development Kit for Terraform) project.

In a previous article, I introduced CDKTF, a framework that lets you write Infrastructure as Code (IaC) using general-purpose programming languages such as Python. Since then, CDKTF has reached its first GA release, making it a good time to revisit it. In this article, we'll walk through deploying a Spring Boot API on AWS ECS using CDKTF.

Find the code for this article in my GitHub repo.

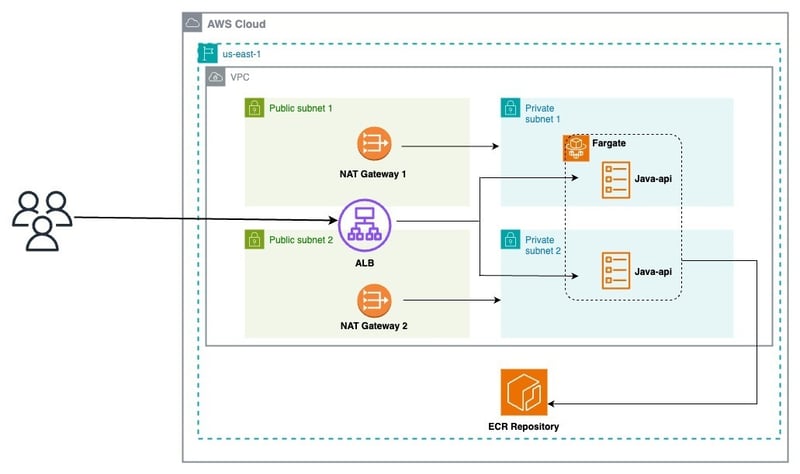

Sebelum terjun ke dalam pelaksanaan, mari semak seni bina yang kami sasarkan untuk digunakan:

Daripada rajah ini, kita boleh memecahkan seni bina kepada 03 lapisan:

API Java yang kami gunakan tersedia di GitHub.

Ia mentakrifkan API REST ringkas dengan tiga titik akhir:

Let's add a Dockerfile:

```dockerfile
FROM maven:3.9-amazoncorretto-21 AS builder
WORKDIR /app
COPY pom.xml .
COPY src src
RUN mvn clean package

# amazon java distribution
FROM amazoncorretto:21-alpine
COPY --from=builder /app/target/*.jar /app/java-api.jar
EXPOSE 8080
ENTRYPOINT ["java","-jar","/app/java-api.jar"]
```

Our application is ready to be deployed!

AWS CDKTF lets you define and manage AWS resources using Python.

- [**python (3.13)**](https://www.python.org/)
- [**pipenv**](https://pipenv.pypa.io/en/latest/)
- [**npm**](https://nodejs.org/en/)

Make sure you have the necessary tools by installing CDKTF and its dependencies:

```shell
$ npm install -g cdktf-cli@latest
```

This installs the cdktf CLI, which lets you spin up new projects in various languages.

We can scaffold a new Python project by running:

```shell
# init the project using aws provider
$ mkdir samples-fargate
$ cd samples-fargate && cdktf init --template=python --providers=aws
```

Many files are created by default, and all dependencies are installed.

Below is the initial main.py file:

```python
#!/usr/bin/env python
from constructs import Construct
from cdktf import App, TerraformStack


class MyStack(TerraformStack):
    def __init__(self, scope: Construct, id: str):
        super().__init__(scope, id)

        # define resources here


app = App()
MyStack(app, "aws-cdktf-samples-fargate")

app.synth()
```

A stack represents a group of infrastructure resources that CDK for Terraform (CDKTF) compiles into a distinct Terraform configuration. Stacks enable separate state management for different environments within an application. To share resources across layers, we will use cross-stack references.
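To make the idea of separate state per stack more concrete, here is a minimal sketch of one possible layout, in which each stack stores its state under its own key in a shared bucket. The `state_key` helper and the stack names are illustrative assumptions, not part of the CDKTF API.

```python
def state_key(prefix: str, stack_name: str) -> str:
    """Build the object key under which a stack's Terraform state is stored."""
    return f"{prefix.rstrip('/')}/{stack_name}.tfstate"


# Hypothetical environment prefix and stack names, for illustration only.
ENV = "dev"
PREFIX = f"{ENV}/cdktf-samples"

keys = {name: state_key(PREFIX, name) for name in ("network", "infra", "service")}

for name, key in keys.items():
    print(f"{name:8s} -> {key}")
```

Because every stack gets a distinct key, deploying or destroying one layer never rewrites another layer's state file.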

Add a network_stack.py file to your project:

```shell
$ mkdir infra
$ cd infra && touch network_stack.py
```

Add the following code to create all the network resources:

```python
from constructs import Construct
from cdktf import S3Backend, TerraformStack
from cdktf_cdktf_provider_aws.provider import AwsProvider
from cdktf_cdktf_provider_aws.vpc import Vpc
from cdktf_cdktf_provider_aws.subnet import Subnet
from cdktf_cdktf_provider_aws.eip import Eip
from cdktf_cdktf_provider_aws.nat_gateway import NatGateway
from cdktf_cdktf_provider_aws.route import Route
from cdktf_cdktf_provider_aws.route_table import RouteTable
from cdktf_cdktf_provider_aws.route_table_association import RouteTableAssociation
from cdktf_cdktf_provider_aws.internet_gateway import InternetGateway


class NetworkStack(TerraformStack):
    def __init__(self, scope: Construct, ns: str, params: dict):
        super().__init__(scope, ns)

        self.region = params["region"]

        # configure the AWS provider with the region passed in params
        AwsProvider(self, "AWS", region=self.region)

        # use S3 as backend
        S3Backend(
            self,
            bucket=params["backend_bucket"],
            key=params["backend_key_prefix"] + "/network.tfstate",
            region=self.region,
        )

        # create the vpc
        vpc_demo = Vpc(self, "vpc-demo", cidr_block="192.168.0.0/16")

        # create two public subnets
        public_subnet1 = Subnet(
            self,
            "public-subnet-1",
            vpc_id=vpc_demo.id,
            availability_zone=f"{self.region}a",
            cidr_block="192.168.1.0/24",
        )

        public_subnet2 = Subnet(
            self,
            "public-subnet-2",
            vpc_id=vpc_demo.id,
            availability_zone=f"{self.region}b",
            cidr_block="192.168.2.0/24",
        )

        # create the internet gateway
        igw = InternetGateway(self, "igw", vpc_id=vpc_demo.id)

        # add a default route to the internet gateway on the VPC's main
        # route table, which the public subnets use implicitly
        public_rt = Route(
            self,
            "public-rt",
            route_table_id=vpc_demo.main_route_table_id,
            destination_cidr_block="0.0.0.0/0",
            gateway_id=igw.id,
        )

        # create the private subnets
        private_subnet1 = Subnet(
            self,
            "private-subnet-1",
            vpc_id=vpc_demo.id,
            availability_zone=f"{self.region}a",
            cidr_block="192.168.10.0/24",
        )

        private_subnet2 = Subnet(
            self,
            "private-subnet-2",
            vpc_id=vpc_demo.id,
            availability_zone=f"{self.region}b",
            cidr_block="192.168.20.0/24",
        )

        # create the Elastic IPs
        eip1 = Eip(self, "nat-eip-1", depends_on=[igw])
        eip2 = Eip(self, "nat-eip-2", depends_on=[igw])

        # create the NAT Gateways
        private_nat_gw1 = NatGateway(
            self,
            "private-nat-1",
            subnet_id=public_subnet1.id,
            allocation_id=eip1.id,
        )

        private_nat_gw2 = NatGateway(
            self,
            "private-nat-2",
            subnet_id=public_subnet2.id,
            allocation_id=eip2.id,
        )

        # create Route Tables
        private_rt1 = RouteTable(self, "private-rt1", vpc_id=vpc_demo.id)
        private_rt2 = RouteTable(self, "private-rt2", vpc_id=vpc_demo.id)

        # add default routes to tables
        Route(
            self,
            "private-rt1-default-route",
            route_table_id=private_rt1.id,
            destination_cidr_block="0.0.0.0/0",
            nat_gateway_id=private_nat_gw1.id,
        )

        Route(
            self,
            "private-rt2-default-route",
            route_table_id=private_rt2.id,
            destination_cidr_block="0.0.0.0/0",
            nat_gateway_id=private_nat_gw2.id,
        )

        # associate the private route tables with the private subnets
        # (the original listing also declared a "public-rt-association" that
        # repeated the private-subnet-2 association; it is dropped here because
        # the public subnets already use the main route table)
        RouteTableAssociation(
            self,
            "private-rt1-association",
            subnet_id=private_subnet1.id,
            route_table_id=private_rt1.id,
        )

        RouteTableAssociation(
            self,
            "private-rt2-association",
            subnet_id=private_subnet2.id,
            route_table_id=private_rt2.id,
        )

        # expose IDs for cross-stack references
        self.vpc_id = vpc_demo.id
        self.public_subnets = [public_subnet1.id, public_subnet2.id]
        self.private_subnets = [private_subnet1.id, private_subnet2.id]
```
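Before deploying, it can be worth sanity-checking the address plan. The following sketch uses Python's standard `ipaddress` module to confirm that the four subnet ranges used in the network stack sit inside the VPC range and do not overlap one another. The `check_plan` helper is a hypothetical name introduced for this illustration.

```python
from ipaddress import ip_network
from itertools import combinations

VPC_CIDR = "192.168.0.0/16"
SUBNET_CIDRS = {
    "public-subnet-1": "192.168.1.0/24",
    "public-subnet-2": "192.168.2.0/24",
    "private-subnet-1": "192.168.10.0/24",
    "private-subnet-2": "192.168.20.0/24",
}


def check_plan(vpc_cidr: str, subnets: dict) -> list:
    """Return a list of problems; an empty list means the plan is consistent."""
    vpc = ip_network(vpc_cidr)
    nets = {name: ip_network(cidr) for name, cidr in subnets.items()}
    problems = []
    # every subnet must be contained in the VPC range
    for name, net in nets.items():
        if not net.subnet_of(vpc):
            problems.append(f"{name} ({net}) is outside the VPC range {vpc}")
    # no two subnets may share addresses
    for (a, net_a), (b, net_b) in combinations(nets.items(), 2):
        if net_a.overlaps(net_b):
            problems.append(f"{a} ({net_a}) overlaps {b} ({net_b})")
    return problems


problems = check_plan(VPC_CIDR, SUBNET_CIDRS)
print(problems or "address plan looks consistent")
```

Running a check like this locally is much cheaper than discovering a CIDR conflict halfway through a deployment.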

Then, edit the main.py file:

```python
#!/usr/bin/env python
from constructs import Construct
from cdktf import App, TerraformStack
from infra.network_stack import NetworkStack

ENV = "dev"
AWS_REGION = "us-east-1"
BACKEND_S3_BUCKET = "blog.abdelfare.me"
BACKEND_S3_KEY = f"{ENV}/cdktf-samples"


class MyStack(TerraformStack):
    def __init__(self, scope: Construct, id: str):
        super().__init__(scope, id)

        # define resources here


app = App()
MyStack(app, "aws-cdktf-samples-fargate")

network = NetworkStack(
    app,
    "network",
    {
        "region": AWS_REGION,
        "backend_bucket": BACKEND_S3_BUCKET,
        "backend_key_prefix": BACKEND_S3_KEY,
    },
)

app.synth()
```

Generate the Terraform configuration files by running the following command:

```shell
$ cdktf synth
```

Deploy the network stack with:

```shell
$ cdktf deploy network
```

Once the deployment completes, our VPC is ready.

Add an infra_stack.py file to your project:

```shell
$ cd infra && touch infra_stack.py
```

Add the following code to create all the infrastructure resources:

```python
from constructs import Construct
from cdktf import S3Backend, TerraformStack
from cdktf_cdktf_provider_aws.provider import AwsProvider
from cdktf_cdktf_provider_aws.ecs_cluster import EcsCluster
from cdktf_cdktf_provider_aws.lb import Lb
from cdktf_cdktf_provider_aws.lb_listener import (
    LbListener,
    LbListenerDefaultAction,
    LbListenerDefaultActionFixedResponse,
)
from cdktf_cdktf_provider_aws.security_group import (
    SecurityGroup,
    SecurityGroupIngress,
    SecurityGroupEgress,
)


class InfraStack(TerraformStack):
    def __init__(self, scope: Construct, ns: str, network: dict, params: dict):
        super().__init__(scope, ns)

        self.region = params["region"]

        # configure the AWS provider with the region passed in params
        AwsProvider(self, "AWS", region=self.region)

        # use S3 as backend
        S3Backend(
            self,
            bucket=params["backend_bucket"],
            key=params["backend_key_prefix"] + "/load_balancer.tfstate",
            region=self.region,
        )

        # create the ALB security group
        alb_sg = SecurityGroup(
            self,
            "alb-sg",
            vpc_id=network["vpc_id"],
            ingress=[
                SecurityGroupIngress(
                    protocol="tcp", from_port=80, to_port=80, cidr_blocks=["0.0.0.0/0"]
                )
            ],
            egress=[
                SecurityGroupEgress(
                    protocol="-1", from_port=0, to_port=0, cidr_blocks=["0.0.0.0/0"]
                )
            ],
        )

        # create the ALB
        alb = Lb(
            self,
            "alb",
            internal=False,
            load_balancer_type="application",
            security_groups=[alb_sg.id],
            subnets=network["public_subnets"],
        )

        # create the LB Listener
        alb_listener = LbListener(
            self,
            "alb-listener",
            load_balancer_arn=alb.arn,
            port=80,
            protocol="HTTP",
            default_action=[
                LbListenerDefaultAction(
                    type="fixed-response",
                    fixed_response=LbListenerDefaultActionFixedResponse(
                        content_type="text/plain",
                        status_code="404",
                        message_body="Could not find the resource you are looking for",
                    ),
                )
            ],
        )

        # create the ECS cluster
        cluster = EcsCluster(self, "cluster", name=params["cluster_name"])

        # expose identifiers for cross-stack references
        self.alb_arn = alb.arn
        self.alb_listener = alb_listener.arn
        self.alb_sg = alb_sg.id
        self.cluster_id = cluster.id
```
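The listener's default action is what callers see until a rule matches their request. As a rough mental model only (this is not how the ALB is implemented), the sketch below mimics that behaviour in plain Python: rules are tried in order, and a request that matches none of them falls through to the fixed 404 response. The `route` function and the target label are hypothetical, and `fnmatchcase` only approximates ALB path-pattern wildcards.

```python
from fnmatch import fnmatchcase

# Mirrors the fixed-response default action configured on the listener.
DEFAULT_RESPONSE = (404, "Could not find the resource you are looking for")


def route(path, rules):
    """Return the target of the first rule whose pattern matches the path,
    or the listener's default response when no rule matches."""
    for pattern, target in rules:
        if fnmatchcase(path, pattern):
            return target
    return DEFAULT_RESPONSE


# With no rules attached, every request gets the default 404.
print(route("/ping", []))

# Once a catch-all rule is attached, requests are forwarded instead.
print(route("/ping", [("/*", "forward:svc-tg")]))
```

This is why hitting the load balancer right after deploying this layer returns a 404: nothing is registered behind the listener yet.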

Edit the main.py file:

```python
...

CLUSTER_NAME = "cdktf-samples"

...

infra = InfraStack(
    app,
    "infra",
    {
        "vpc_id": network.vpc_id,
        "public_subnets": network.public_subnets,
    },
    {
        "region": AWS_REGION,
        "backend_bucket": BACKEND_S3_BUCKET,
        "backend_key_prefix": BACKEND_S3_KEY,
        "cluster_name": CLUSTER_NAME,
    },
)

...
```

Deploy the infra stack with:

```shell
$ cdktf deploy network infra
```

Note the ALB's DNS name; we will use it later.

Add a service_stack.py file to your project:

```shell
$ mkdir apps
$ cd apps && touch service_stack.py
```

Add the following code to create all the ECS service resources:

```python
from constructs import Construct
import json
from cdktf import S3Backend, TerraformStack, Token, TerraformOutput
from cdktf_cdktf_provider_aws.provider import AwsProvider
from cdktf_cdktf_provider_aws.ecs_service import (
    EcsService,
    EcsServiceLoadBalancer,
    EcsServiceNetworkConfiguration,
)
from cdktf_cdktf_provider_aws.ecr_repository import (
    EcrRepository,
    EcrRepositoryImageScanningConfiguration,
)
from cdktf_cdktf_provider_aws.ecr_lifecycle_policy import EcrLifecyclePolicy
from cdktf_cdktf_provider_aws.ecs_task_definition import (
    EcsTaskDefinition,
)
from cdktf_cdktf_provider_aws.lb_listener_rule import (
    LbListenerRule,
    LbListenerRuleAction,
    LbListenerRuleCondition,
    LbListenerRuleConditionPathPattern,
)
from cdktf_cdktf_provider_aws.lb_target_group import (
    LbTargetGroup,
    LbTargetGroupHealthCheck,
)
from cdktf_cdktf_provider_aws.security_group import (
    SecurityGroup,
    SecurityGroupIngress,
    SecurityGroupEgress,
)
from cdktf_cdktf_provider_aws.cloudwatch_log_group import CloudwatchLogGroup
from cdktf_cdktf_provider_aws.data_aws_iam_policy_document import (
    DataAwsIamPolicyDocument,
)
from cdktf_cdktf_provider_aws.iam_role import IamRole
from cdktf_cdktf_provider_aws.iam_role_policy_attachment import IamRolePolicyAttachment


class ServiceStack(TerraformStack):
    def __init__(
        self, scope: Construct, ns: str, network: dict, infra: dict, params: dict
    ):
        super().__init__(scope, ns)

        self.region = params["region"]

        # configure the AWS provider with the region passed in params
        AwsProvider(self, "AWS", region=self.region)

        # use S3 as backend
        S3Backend(
            self,
            bucket=params["backend_bucket"],
            key=params["backend_key_prefix"] + "/" + params["app_name"] + ".tfstate",
            region=self.region,
        )

        # create the service security group (only the ALB may reach the app port)
        svc_sg = SecurityGroup(
            self,
            "svc-sg",
            vpc_id=network["vpc_id"],
            ingress=[
                SecurityGroupIngress(
                    protocol="tcp",
                    from_port=params["app_port"],
                    to_port=params["app_port"],
                    security_groups=[infra["alb_sg"]],
                )
            ],
            egress=[
                SecurityGroupEgress(
                    protocol="-1", from_port=0, to_port=0, cidr_blocks=["0.0.0.0/0"]
                )
            ],
        )

        # create the service target group
        svc_tg = LbTargetGroup(
            self,
            "svc-target-group",
            name="svc-tg",
            port=params["app_port"],
            protocol="HTTP",
            vpc_id=network["vpc_id"],
            target_type="ip",
            health_check=LbTargetGroupHealthCheck(path="/ping", matcher="200"),
        )

        # create the service listener rule
        LbListenerRule(
            self,
            "alb-rule",
            listener_arn=infra["alb_listener"],
            action=[LbListenerRuleAction(type="forward", target_group_arn=svc_tg.arn)],
            condition=[
                LbListenerRuleCondition(
                    path_pattern=LbListenerRuleConditionPathPattern(values=["/*"])
                )
            ],
        )

        # create the ECR repository
        repo = EcrRepository(
            self,
            params["app_name"],
            image_scanning_configuration=EcrRepositoryImageScanningConfiguration(
                scan_on_push=True
            ),
            image_tag_mutability="MUTABLE",
            name=params["app_name"],
        )

        EcrLifecyclePolicy(
            self,
            "this",
            repository=repo.name,
            policy=json.dumps(
                {
                    "rules": [
                        {
                            "rulePriority": 1,
                            "description": "Keep last 10 images",
                            "selection": {
                                "tagStatus": "tagged",
                                "tagPrefixList": ["v"],
                                "countType": "imageCountMoreThan",
                                "countNumber": 10,
                            },
                            "action": {"type": "expire"},
                        },
                        {
                            "rulePriority": 2,
                            "description": "Expire images older than 3 days",
                            "selection": {
                                "tagStatus": "untagged",
                                "countType": "sinceImagePushed",
                                "countUnit": "days",
                                "countNumber": 3,
                            },
                            "action": {"type": "expire"},
                        },
                    ]
                }
            ),
        )

        # create the service log group
        service_log_group = CloudwatchLogGroup(
            self,
            "svc_log_group",
            name=params["app_name"],
            retention_in_days=1,
        )

        # trust policy that lets ECS tasks assume the roles below
        ecs_assume_role = DataAwsIamPolicyDocument(
            self,
            "assume_role",
            statement=[
                {
                    "actions": ["sts:AssumeRole"],
                    "principals": [
                        {
                            "identifiers": ["ecs-tasks.amazonaws.com"],
                            "type": "Service",
                        },
                    ],
                },
            ],
        )

        # create the service execution role
        service_execution_role = IamRole(
            self,
            "service_execution_role",
            assume_role_policy=ecs_assume_role.json,
            name=params["app_name"] + "-exec-role",
        )

        IamRolePolicyAttachment(
            self,
            "ecs_role_policy",
            policy_arn="arn:aws:iam::aws:policy/service-role/AmazonECSTaskExecutionRolePolicy",
            role=service_execution_role.name,
        )

        # create the service task role
        service_task_role = IamRole(
            self,
            "service_task_role",
            assume_role_policy=ecs_assume_role.json,
            name=params["app_name"] + "-task-role",
        )

        # create the service task definition
        task = EcsTaskDefinition(
            self,
            "svc-task",
            family="service",
            network_mode="awsvpc",
            requires_compatibilities=["FARGATE"],
            cpu="256",
            memory="512",
            task_role_arn=service_task_role.arn,
            execution_role_arn=service_execution_role.arn,
            container_definitions=json.dumps(
                [
                    {
                        "name": "svc",
                        "image": f"{repo.repository_url}:latest",
                        "networkMode": "awsvpc",
                        "healthCheck": {
                            "Command": ["CMD-SHELL", "echo hello"],
                            "Interval": 5,
                            "Timeout": 2,
                            "Retries": 3,
                        },
                        "portMappings": [
                            {
                                "containerPort": params["app_port"],
                                "hostPort": params["app_port"],
                            }
                        ],
                        "logConfiguration": {
                            "logDriver": "awslogs",
                            "options": {
                                "awslogs-group": service_log_group.name,
                                "awslogs-region": params["region"],
                                "awslogs-stream-prefix": params["app_name"],
                            },
                        },
                    }
                ]
            ),
        )

        # create the ECS service
        EcsService(
            self,
            "ecs_service",
            name=params["app_name"] + "-service",
            cluster=infra["cluster_id"],
            task_definition=task.arn,
            desired_count=params["desired_count"],
            launch_type="FARGATE",
            force_new_deployment=True,
            network_configuration=EcsServiceNetworkConfiguration(
                subnets=network["private_subnets"],
                security_groups=[svc_sg.id],
            ),
            load_balancer=[
                EcsServiceLoadBalancer(
                    target_group_arn=svc_tg.arn,
                    container_name="svc",
                    container_port=params["app_port"],
                )
            ],
        )

        TerraformOutput(
            self,
            "ecr_repository_url",
            description="url of the ecr repo",
            value=repo.repository_url,
        )
```
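`ServiceStack` reads its inputs from three plain dictionaries, so a missing key only surfaces as a `KeyError` at synth time. A small pre-flight check can catch this earlier. The sketch below lists the keys the stack actually looks up; the `missing_keys` helper and all the example values (application name, port, resource IDs) are assumptions chosen for illustration.

```python
# Keys that ServiceStack looks up in each of its three dictionary arguments.
REQUIRED_KEYS = {
    "network": {"vpc_id", "private_subnets"},
    "infra": {"alb_sg", "alb_listener", "cluster_id"},
    "params": {
        "region",
        "backend_bucket",
        "backend_key_prefix",
        "app_name",
        "app_port",
        "desired_count",
    },
}


def missing_keys(network: dict, infra: dict, params: dict) -> dict:
    """Map each argument name to the sorted list of keys it lacks."""
    given = {"network": network, "infra": infra, "params": params}
    report = {}
    for name, required in REQUIRED_KEYS.items():
        absent = sorted(required - set(given[name]))
        if absent:
            report[name] = absent
    return report


# Hypothetical values; "desired_count" is left out on purpose.
report = missing_keys(
    network={"vpc_id": "vpc-123", "private_subnets": ["subnet-a", "subnet-b"]},
    infra={"alb_sg": "sg-123", "alb_listener": "arn:listener", "cluster_id": "cluster-1"},
    params={
        "region": "us-east-1",
        "backend_bucket": "my-bucket",
        "backend_key_prefix": "dev/cdktf-samples",
        "app_name": "java-api",
        "app_port": 8080,
    },
)
print(report)
```

An empty report means the dictionaries are complete and can be handed to the stack constructor.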

Update main.py (for the last time) so that it instantiates ServiceStack, then deploy the service stack with `cdktf deploy`, as for the previous stacks.

Here we go!

We have successfully created all the resources needed to deploy a new service on AWS ECS Fargate.

Run `cdktf list` to get the list of your stacks.

To automate deployments, let's integrate a GitHub Actions workflow into our java-api. After enabling GitHub Actions and setting the secrets and variables for your repository, create a .github/workflows/deploy.yml file containing the deployment workflow.

Our workflow runs successfully.

Step 5: Validate the deployment
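One way to validate the deployment is to call the service through the load balancer. The sketch below uses only the standard library and targets the `/ping` path that the target group health check also uses. The DNS name is a placeholder to replace with your own ALB's, and `build_url` and `check_endpoint` are hypothetical helpers; the `fetch` parameter exists so the function can be exercised without a network connection.

```python
from urllib.request import urlopen

# Placeholder: replace with the DNS name of your own ALB.
ALB_DNS_NAME = "my-alb-1234567890.us-east-1.elb.amazonaws.com"


def build_url(dns_name: str, path: str = "/ping") -> str:
    """Build the plain-HTTP URL served by the listener on port 80."""
    return f"http://{dns_name.strip('/')}/{path.lstrip('/')}"


def check_endpoint(url: str, fetch=urlopen, timeout: float = 5.0) -> bool:
    """Return True when the endpoint answers with HTTP 200, False otherwise."""
    try:
        with fetch(url, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # urllib's URLError and HTTPError both derive from OSError
        return False


print(build_url(ALB_DNS_NAME))
```

Calling `check_endpoint(build_url(ALB_DNS_NAME))` from a machine with internet access should return `True` once the service tasks are healthy behind the load balancer.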

Final Thoughts

While the CDKTF project offers many interesting features for infrastructure management, I have to admit that I find it a bit too verbose at times.

Do you have any experience with CDKTF? Have you used it in production?

Feel free to share your experience with us.
