Adding Filter in Hadoop Mapper Class


Here is my solution to the disk space shortage problem I described in the previous post. The core principle is to reduce the number of output records at the Mapper stage. The method I used is a filter, explained below, that decreases the number of Mapper output records. That in turn significantly decreases the Mapper's spilled records and fundamentally reduces disk space usage. After applying the filter to an input data set of about 200 MB with 30,661 records, the total Spilled Records count is 25,471,725, and the job takes only about 509 MB of disk space!
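A little arithmetic shows why shrinking what the Mapper works on pays off so much. Assume (as a sketch, since the previous post's Mapper is not reproduced here) that the Mapper emits one record per unordered pair of users followed by the same follower. A follower who follows $k$ users then produces:

```latex
\binom{k}{2} = \frac{k(k-1)}{2}
\qquad\text{e.g.}\qquad
\binom{1000}{2} = 499{,}500, \qquad \binom{100}{2} = 4{,}950
```

So if a filter cut one follower's list from 1,000 to 100 users (illustrative numbers, not measured), that record's map output would fall by roughly a factor of 100. Fewer map output records means fewer records to sort and spill to local disk.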

Followed Filter

Now let me reveal what kind of filter it is and how I implemented it. The filter is called the Followed Filter: it excludes a user from co-followed combination computing if the user's followed number (the number of users following them) does not reach a certain value, called the Followed Threshold.

The Followed Filter reduces the number of co-followed combinations at the Mapper stage. Say we set the followed threshold to 100: users who don't have 100 fans (i.e., are not followed by 100 other users) will be ignored during the co-followed combination computing stage. (To pick the actual threshold, we need to analyze the statistics of users' followed numbers in our data set.)
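A minimal plain-Java sketch of the filter, assuming each Mapper input record is one follower plus the list of users they follow, that followed counts are already available as a map, and that the Mapper emits one key per unordered pair of followed users. It uses no Hadoop classes; in a real job this logic would sit inside the Mapper's `map()` method. The names `applyFollowedFilter` and `coFollowedPairs` and the sample data are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Plain-Java sketch of the mapper-side Followed Filter; no Hadoop classes are used.
// Assumption: one input record = one follower plus the users they follow.
public class FollowedFilterSketch {

    // Keeps only the followed users whose follower count reaches the threshold.
    static List<String> applyFollowedFilter(List<String> followees,
                                            Map<String, Integer> followedCount,
                                            int followedThreshold) {
        List<String> kept = new ArrayList<>();
        for (String user : followees) {
            // Users missing from the count map are treated as having 0 followers.
            if (followedCount.getOrDefault(user, 0) >= followedThreshold) {
                kept.add(user);
            }
        }
        return kept;
    }

    // Builds one key per unordered pair, ordered lexicographically so that
    // "alice,carol" and "carol,alice" collapse into the same reducer key.
    static List<String> coFollowedPairs(List<String> followees) {
        List<String> pairs = new ArrayList<>();
        for (int i = 0; i < followees.size(); i++) {
            for (int j = i + 1; j < followees.size(); j++) {
                String a = followees.get(i);
                String b = followees.get(j);
                pairs.add(a.compareTo(b) <= 0 ? a + "," + b : b + "," + a);
            }
        }
        return pairs;
    }

    public static void main(String[] args) {
        // Hypothetical follower counts and one follower's followee list.
        Map<String, Integer> followedCount =
                Map.of("alice", 150, "bob", 40, "carol", 120, "dave", 5);
        List<String> followees = List.of("alice", "bob", "carol", "dave");
        int followedThreshold = 100; // the example threshold from the text

        // prints "without filter: 6 pairs"
        System.out.println("without filter: " + coFollowedPairs(followees).size() + " pairs");
        List<String> kept = applyFollowedFilter(followees, followedCount, followedThreshold);
        // prints "with filter: [alice,carol]"
        System.out.println("with filter: " + coFollowedPairs(kept));
    }
}
```

Filtering before building pairs is the point of the design: the pair loop is quadratic in the list length, so dropping unpopular users first shrinks the Mapper's output far more than filtering pairs afterwards would.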

Reason

Choosing the followed filter is reasonable because the number of users who follow someone is a metric of that user's popularity.

HOW

To implement it, we need to:

First, count each user's followed number across our data set, which requires a new MapReduce job;

Second, choose a followed threshold after analyzing the statistics of the followed-number data obtained in the first step;

Third, use Hadoop's DistributedCache to distribute the users who satisfy the filter to all Mappers;

Fourth, add the followed filter to the Mapper class, so that only users who satisfy the filter condition are passed into the co-followed combination computing phase;

Fifth, add a co-followed filter/threshold on the Reducer side if necessary.
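Steps one, two, three and five can be simulated in memory to see how they fit together. This is a plain-Java sketch, not Hadoop code: the input line format `follower<TAB>followee1,followee2,...`, the nearest-rank percentile used to pick a threshold, and all method names are assumptions for illustration. In a real job, step one would be its own MapReduce job, and the user set from step three would be written to a file, shipped through the DistributedCache, and loaded into a set in each Mapper's `setup()`.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.OptionalInt;
import java.util.Set;
import java.util.TreeMap;
import java.util.TreeSet;

// In-memory simulation of steps 1, 2, 3 and 5 (plain Java, not the Hadoop API).
// Assumed input line format (hypothetical): "follower<TAB>followee1,followee2,...".
public class FollowedPipelineSketch {

    // Step 1: the "map" side emits (followee, 1); the "reduce" side sums per followee.
    static Map<String, Integer> countFollowed(List<String> lines) {
        Map<String, Integer> counts = new HashMap<>();
        for (String line : lines) {
            String[] parts = line.split("\t", 2);
            if (parts.length < 2 || parts[1].isEmpty()) {
                continue; // follower who follows nobody
            }
            for (String followee : parts[1].split(",")) {
                counts.merge(followee, 1, Integer::sum);
            }
        }
        return counts;
    }

    // Step 2 (one possible heuristic, assumed here): nearest-rank percentile
    // of the followed-count distribution.
    static int percentileThreshold(Map<String, Integer> counts, double percentile) {
        int[] values = counts.values().stream().mapToInt(Integer::intValue).sorted().toArray();
        if (values.length == 0) {
            return 0;
        }
        int rank = (int) Math.ceil(percentile / 100.0 * values.length); // 1-based rank
        return values[Math.max(0, Math.min(rank - 1, values.length - 1))];
    }

    // Step 3 stand-in: the users that would be written to a file and shipped
    // to every Mapper through the DistributedCache.
    static Set<String> qualifyingUsers(Map<String, Integer> counts, int threshold) {
        Set<String> users = new HashSet<>();
        counts.forEach((user, n) -> {
            if (n >= threshold) {
                users.add(user);
            }
        });
        return users;
    }

    // Step 5: sum the 1s received for one co-followed pair and keep the pair
    // only if the total reaches the co-followed threshold.
    static OptionalInt reduceCoFollowed(List<Integer> ones, int coFollowedThreshold) {
        int sum = 0;
        for (int v : ones) {
            sum += v;
        }
        return sum >= coFollowedThreshold ? OptionalInt.of(sum) : OptionalInt.empty();
    }

    public static void main(String[] args) {
        List<String> lines = List.of(
                "u1\talice,bob,carol",
                "u2\talice,carol",
                "u3\talice,dave",
                "u4\tcarol");
        Map<String, Integer> counts = countFollowed(lines);
        int threshold = percentileThreshold(counts, 75.0);
        Set<String> cached = qualifyingUsers(counts, threshold);

        System.out.println("followed counts: " + new TreeMap<>(counts));
        System.out.println("threshold: " + threshold);
        System.out.println("users to cache: " + new TreeSet<>(cached));
        // u1 and u2 both follow alice and carol, so the reducer sees two 1s for that pair.
        System.out.println("alice,carol kept: " + reduceCoFollowed(List.of(1, 1), 2).isPresent());
    }
}
```

With the four sample lines, alice and carol each have 3 followers and bob and dave have 1, so the 75th-percentile threshold is 3 and only alice and carol would be cached for the Mappers.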

Outcomes

Here is the Hadoop Job Summary after applying the followed filter with a followed threshold of 1000, which means only users followed by at least 1000 users take part in co-followed combinations. Compared with the Job Summary in my previous post, almost all metrics have improved significantly:

| Counter | Map | Reduce | Total |
|---|---:|---:|---:|
| Bytes Written | 0 | 1,798,185 | 1,798,185 |
| Bytes Read | 203,401,876 | 0 | 203,401,876 |
| FILE_BYTES_READ | 405,219,906 | 52,107,486 | 457,327,392 |
| HDFS_BYTES_READ | 203,402,751 | 0 | 203,402,751 |
| FILE_BYTES_WRITTEN | 457,707,759 | 52,161,704 | 509,869,463 |
| HDFS_BYTES_WRITTEN | 0 | 1,798,185 | 1,798,185 |
| Reduce input groups | 0 | 373,680 | 373,680 |
| Map output materialized bytes | 52,107,522 | 0 | 52,107,522 |
| Combine output records | 22,202,756 | 0 | 22,202,756 |
| Map input records | 30,661 | 0 | 30,661 |
| Reduce shuffle bytes | 0 | 52,107,522 | 52,107,522 |
| Physical memory (bytes) snapshot | 2,646,589,440 | 116,408,320 | 2,762,997,760 |
| Reduce output records | 0 | 373,680 | 373,680 |
| Spilled Records | 22,866,351 | 2,605,374 | 25,471,725 |
| Map output bytes | 2,115,139,050 | 0 | 2,115,139,050 |
| Total committed heap usage (bytes) | 2,813,853,696 | 84,738,048 | 2,898,591,744 |
| CPU time spent (ms) | 5,766,680 | 11,210 | 5,777,890 |
| Virtual memory (bytes) snapshot | 9,600,737,280 | 1,375,002,624 | 10,975,739,904 |
| SPLIT_RAW_BYTES | 875 | 0 | 875 |
| Map output records | 117,507,725 | 0 | 117,507,725 |
| Combine input records | 137,105,107 | 0 | 137,105,107 |
| Reduce input records | 0 | 2,605,374 | 2,605,374 |

P.S.

Frankly speaking, chances are I am on the wrong track with Hadoop programming: I'm playing with pseudo-distributed Hadoop on my personal computer, which has 4 CPUs and 4 GB of RAM. On a real Hadoop cluster, disk space might never be a problem, and all the tuning work I have done may turn out to be meaningless. Before the Followed Filter, I also did some other Hadoop tuning, such as a custom Writable class, a RawComparator, and adjusting the block size and io.sort.mb.

---EOF---

Original article: Adding Filter in Hadoop Mapper Class. Thanks to the original author for sharing.

熱AI工具

Undresser.AI Undress

人工智慧驅動的應用程序,用於創建逼真的裸體照片

AI Clothes Remover

用於從照片中去除衣服的線上人工智慧工具。

Undress AI Tool

免費脫衣圖片

Clothoff.io

AI脫衣器

Video Face Swap

使用我們完全免費的人工智慧換臉工具,輕鬆在任何影片中換臉!

熱門文章

熱工具

記事本++7.3.1

好用且免費的程式碼編輯器

SublimeText3漢化版

中文版,非常好用

禪工作室 13.0.1

強大的PHP整合開發環境

Dreamweaver CS6

視覺化網頁開發工具

SublimeText3 Mac版

神級程式碼編輯軟體(SublimeText3)

Java錯誤:Hadoop錯誤,如何處理與避免

Jun 24, 2023 pm 01:06 PM

Java錯誤:Hadoop錯誤,如何處理與避免

Jun 24, 2023 pm 01:06 PM

Java錯誤:Hadoop錯誤,如何處理和避免使用Hadoop處理大數據時,常常會遇到一些Java異常錯誤,這些錯誤可能會影響任務的執行,導致資料處理失敗。本文將介紹一些常見的Hadoop錯誤,並提供處理和避免這些錯誤的方法。 Java.lang.OutOfMemoryErrorOutOfMemoryError是Java虛擬機器記憶體不足的錯誤。當Hadoop任

idea springBoot專案自動注入mapper為空報錯誤如何解決

May 17, 2023 pm 06:49 PM

idea springBoot專案自動注入mapper為空報錯誤如何解決

May 17, 2023 pm 06:49 PM

在SpringBoot專案中,如果使用了MyBatis作為持久層框架,使用自動注入時可能會遇到mapper報空指標異常的問題。這是因為在自動注入時,SpringBoot無法正確識別MyBatis的Mapper接口,需要進行一些額外的配置。解決這個問題的方法有兩種:1.在Mapper介面上加入註解在Mapper介面上加入@Mapper註解,告訴SpringBoot這個介面是Mapper接口,需要進行代理。範例如下:@MapperpublicinterfaceUserMapper{//...}2

![解決「[Vue warn]: Failed to resolve filter」錯誤的方法](https://img.php.cn/upload/article/000/887/227/169243040583797.jpg?x-oss-process=image/resize,m_fill,h_207,w_330) 解決「[Vue warn]: Failed to resolve filter」錯誤的方法

Aug 19, 2023 pm 03:33 PM

解決「[Vue warn]: Failed to resolve filter」錯誤的方法

Aug 19, 2023 pm 03:33 PM

解決「[Vuewarn]:Failedtoresolvefilter」錯誤的方法在使用Vue進行開發的過程中,我們有時會遇到一個錯誤提示:「[Vuewarn]:Failedtoresolvefilter」。這個錯誤提示通常出現在我們在模板中使用了一個未定義的過濾器的情況下。本文將介紹如何解決這個錯誤並給出相應的程式碼範例。當我們在Vue的

如何使用PHP和Hadoop進行大數據處理

Jun 19, 2023 pm 02:24 PM

如何使用PHP和Hadoop進行大數據處理

Jun 19, 2023 pm 02:24 PM

隨著資料量的不斷增大,傳統的資料處理方式已經無法處理大數據時代所帶來的挑戰。 Hadoop是開源的分散式運算框架,它透過分散式儲存和處理大量的數據,解決了單節點伺服器在大數據處理中帶來的效能瓶頸問題。 PHP是一種腳本語言,廣泛應用於Web開發,而且具有快速開發、易於維護等優點。本文將介紹如何使用PHP和Hadoop進行大數據處理。什麼是HadoopHadoop是

在Beego中使用Hadoop和HBase進行大數據儲存和查詢

Jun 22, 2023 am 10:21 AM

在Beego中使用Hadoop和HBase進行大數據儲存和查詢

Jun 22, 2023 am 10:21 AM

隨著大數據時代的到來,資料處理和儲存變得越來越重要,如何有效率地管理和分析大量的資料也成為企業面臨的挑戰。 Hadoop和HBase作為Apache基金會的兩個項目,為大數據儲存和分析提供了一個解決方案。本文將介紹如何在Beego中使用Hadoop和HBase進行大數據儲存和查詢。一、Hadoop和HBase簡介Hadoop是一個開源的分散式儲存和運算系統,它可

探索Java在大數據領域的應用:Hadoop、Spark、Kafka等技術堆疊的了解

Dec 26, 2023 pm 02:57 PM

探索Java在大數據領域的應用:Hadoop、Spark、Kafka等技術堆疊的了解

Dec 26, 2023 pm 02:57 PM

Java大數據技術堆疊:了解Java在大數據領域的應用,如Hadoop、Spark、Kafka等隨著資料量不斷增加,大數據技術成為了當今網路時代的熱門話題。在大數據領域,我們常聽到Hadoop、Spark、Kafka等技術的名字。這些技術起到了至關重要的作用,而Java作為一門廣泛應用的程式語言,也在大數據領域發揮著巨大的作用。本文將重點放在Java在大

springboot如何實作指定mybatis中mapper檔案掃描路徑

May 17, 2023 pm 10:25 PM

springboot如何實作指定mybatis中mapper檔案掃描路徑

May 17, 2023 pm 10:25 PM

指定mybatis中mapper檔案掃描路徑所有的mapper映射檔案mybatis.mapper-locations=classpath*:com/springboot/mapper/*.xml或resource下的mapper映射檔mybatis.mapper-locations=classpath*:mapper/** /*.xmlmybatis配置多個掃描路徑寫法百度得到,但是很亂,稍微整理下:最近拆項目,遇到個小問題,稍微記錄下:

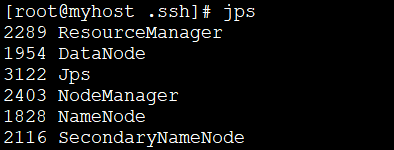

linux下安裝Hadoop的方法是什麼

May 18, 2023 pm 08:19 PM

linux下安裝Hadoop的方法是什麼

May 18, 2023 pm 08:19 PM

一:安裝JDK1.執行以下指令,下載JDK1.8安裝套件。 wget--no-check-certificatehttps://repo.huaweicloud.com/java/jdk/8u151-b12/jdk-8u151-linux-x64.tar.gz2.執行以下命令,解壓縮下載的JDK1.8安裝包。 tar-zxvfjdk-8u151-linux-x64.tar.gz3.移動並重新命名JDK包。 mvjdk1.8.0_151//usr/java84.配置Java環境變數。 echo'