Migrating HBase data between Hadoop clusters

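In day-to-day operations you may often need to migrate or copy HBase data from one cluster to another, and many things can go wrong along the way. Below are the steps and workarounds I used.

Prerequisite: both clusters must run the same HBase version; otherwise unpredictable problems may occur and the migration may fail.

When the two clusters cannot communicate with each other, first copy the HBase data files from the source cluster to the local file system.

The steps are as follows:

From the Hadoop directory, run the following command to copy the table directory to local disk.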

bin/hadoop fs -copyToLocal /hbase/tab_keywordflow /home/test/xiaochenbak

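Then transfer the files, by whatever means you have available, to the cluster you are migrating to and copy them into that table's directory in HDFS.

If the destination cluster already has files for this table, delete them first and then copy.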

bin/hadoop fs -rmr /hbase/tab_keywordflow

bin/hadoop fs -copyFromLocal /home/other/xiaochenbak /hbase/tab_keywordflow

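Here /home/other/xiaochenbak is the local directory on the destination cluster that holds the transferred files.

Next, reset the table's region information in the .META. table: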
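The copy steps above can be summarized as a small dry-run plan. This is only a sketch: `migration_commands` is a hypothetical helper (not part of Hadoop or HBase) that builds the three command strings from this article and prints them without executing anything. It assumes the table lives under /hbase, as in the commands above.

```ruby
# Hypothetical helper: builds the shell commands for the offline copy.
# It only returns strings; nothing is executed against either cluster.
def migration_commands(table, src_backup, dst_backup, hbase_root = "/hbase")
  table_dir = "#{hbase_root}/#{table}"
  [
    # Source cluster: export the table directory to local disk.
    "bin/hadoop fs -copyToLocal #{table_dir} #{src_backup}",
    # Destination cluster: remove any existing copy of the table directory.
    "bin/hadoop fs -rmr #{table_dir}",
    # Destination cluster: upload the transferred files into HDFS.
    "bin/hadoop fs -copyFromLocal #{dst_backup} #{table_dir}"
  ]
end

plan = migration_commands("tab_keywordflow",
                          "/home/test/xiaochenbak",
                          "/home/other/xiaochenbak")
puts plan
```

Printing the plan first makes it easy to check the paths before running the destructive -rmr step by hand.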

bin/hbase org.jruby.Main /home/other/hbase/bin/add_table.rb /hbase/tab_keywordflow

/home/other/hbase/bin/add_table.rb is an executable Ruby script. Its contents are shown below; save them as add_table.rb.

#
# Copyright 2009 The Apache Software Foundation
#
# Licensed to the Apache Software Foundation (ASF) under one
# or more contributor license agreements. See the NOTICE file
# distributed with this work for additional information
# regarding copyright ownership. The ASF licenses this file
# to you under the Apache License, Version 2.0 (the
# "License"); you may not use this file except in compliance
# with the License. You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
#
# Script adds a table back to a running hbase.
# Currently only works if table data is in place.
#
# To see usage for this script, run:
#
# ${HBASE_HOME}/bin/hbase org.jruby.Main add_table.rb
#

include Java
import org.apache.hadoop.hbase.util.Bytes
import org.apache.hadoop.hbase.HConstants
import org.apache.hadoop.hbase.regionserver.HRegion
import org.apache.hadoop.hbase.HRegionInfo
import org.apache.hadoop.hbase.client.HTable
import org.apache.hadoop.hbase.client.Delete
import org.apache.hadoop.hbase.client.Put
import org.apache.hadoop.hbase.client.Scan
import org.apache.hadoop.hbase.HTableDescriptor
import org.apache.hadoop.hbase.HBaseConfiguration
import org.apache.hadoop.hbase.util.FSUtils
import org.apache.hadoop.hbase.util.Writables
import org.apache.hadoop.fs.Path
import org.apache.hadoop.fs.FileSystem
import org.apache.commons.logging.LogFactory

# Name of this script
NAME = "add_table"

# Print usage for this script
def usage
  puts 'Usage: %s.rb TABLE_DIR [alternate_tablename]' % NAME
  exit!
end

# Get configuration to use.
c = HBaseConfiguration.new()

# Set hadoop filesystem configuration using the hbase.rootdir.
# Otherwise, we'll always use localhost though the hbase.rootdir
# might be pointing at hdfs location.
c.set("fs.default.name", c.get(HConstants::HBASE_DIR))
fs = FileSystem.get(c)

# Get a logger.
LOG = LogFactory.getLog(NAME)

# Check arguments: one or two are accepted.
if ARGV.size < 1 || ARGV.size > 2
  usage
end

# Get cmdline args.
srcdir = fs.makeQualified(Path.new(java.lang.String.new(ARGV[0])))
if not fs.exists(srcdir)
  raise IOError.new("src dir " + srcdir.toString() + " doesn't exist!")
end

# Get table name
tableName = nil
if ARGV.size > 1
  tableName = ARGV[1]
  raise IOError.new("Not supported yet")
else
  # If none provided use dirname
  tableName = srcdir.getName()
end
HTableDescriptor.isLegalTableName(tableName.to_java_bytes)

# Figure locations under hbase.rootdir
# Move directories into place; be careful not to overwrite.
rootdir = FSUtils.getRootDir(c)
tableDir = fs.makeQualified(Path.new(rootdir, tableName))

# If a directory currently in place, move it aside.
if srcdir.equals(tableDir)
  LOG.info("Source directory is in place under hbase.rootdir: " + srcdir.toString())
elsif fs.exists(tableDir)
  movedTableName = tableName + "." + java.lang.System.currentTimeMillis().to_s
  movedTableDir = Path.new(rootdir, java.lang.String.new(movedTableName))
  LOG.warn("Moving " + tableDir.toString() + " aside as " + movedTableDir.toString())
  raise IOError.new("Failed move of " + tableDir.toString()) unless fs.rename(tableDir, movedTableDir)
  LOG.info("Moving " + srcdir.toString() + " to " + tableDir.toString())
  raise IOError.new("Failed move of " + srcdir.toString()) unless fs.rename(srcdir, tableDir)
end

# Clean mentions of table from .META.
# Scan the .META. and remove all lines that begin with tablename
LOG.info("Deleting mention of " + tableName + " from .META.")
metaTable = HTable.new(c, HConstants::META_TABLE_NAME)
tableNameMetaPrefix = tableName + HConstants::META_ROW_DELIMITER.chr
scan = Scan.new((tableNameMetaPrefix + HConstants::META_ROW_DELIMITER.chr).to_java_bytes)
scanner = metaTable.getScanner(scan)

# Use java.lang.String doing compares. Ruby String is a bit odd.
tableNameStr = java.lang.String.new(tableName)
while (result = scanner.next())
  rowid = Bytes.toString(result.getRow())
  rowidStr = java.lang.String.new(rowid)
  if not rowidStr.startsWith(tableNameMetaPrefix)
    # Gone too far, break
    break
  end
  LOG.info("Deleting row from catalog: " + rowid)
  d = Delete.new(result.getRow())
  metaTable.delete(d)
end
scanner.close()

# Now, walk the table and per region, add an entry
LOG.info("Walking " + srcdir.toString() + " adding regions to catalog table")
statuses = fs.listStatus(srcdir)
for status in statuses
  next unless status.isDir()
  next if status.getPath().getName() == "compaction.dir"
  regioninfofile = Path.new(status.getPath(), HRegion::REGIONINFO_FILE)
  unless fs.exists(regioninfofile)
    LOG.warn("Missing .regioninfo: " + regioninfofile.toString())
    next
  end
  is = fs.open(regioninfofile)
  hri = HRegionInfo.new()
  hri.readFields(is)
  is.close()
  # TODO: Need to redo table descriptor with passed table name and then recalculate the region encoded names.
  p = Put.new(hri.getRegionName())
  p.add(HConstants::CATALOG_FAMILY, HConstants::REGIONINFO_QUALIFIER, Writables.getBytes(hri))
  metaTable.put(p)
  LOG.info("Added to catalog: " + hri.toString())
end
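To see why the script scans from the table name followed by two delimiters and stops at the first row without the table prefix, here is a plain-Ruby sketch of that loop. It runs without HBase. The names `meta_rows_for` and `rows` are hypothetical, the sample row keys assume the `table,startkey,regionid` layout, the delimiter is assumed to be a comma (as in HConstants::META_ROW_DELIMITER), and ordinary string ordering stands in for the way .META. sorts its keys.

```ruby
# Assumed to match HConstants::META_ROW_DELIMITER.
META_ROW_DELIMITER = ","

# Hypothetical stand-in for the scan loop in add_table.rb.
# sorted_rows plays the role of the .META. row keys in sorted order.
def meta_rows_for(sorted_rows, table_name)
  prefix = table_name + META_ROW_DELIMITER
  start_row = prefix + META_ROW_DELIMITER   # first possible row of the table
  selected = []
  sorted_rows.each do |row|
    next if row < start_row                 # the scanner starts at start_row
    break unless row.start_with?(prefix)    # gone too far: another table
    selected << row
  end
  selected
end

# Made-up catalog rows for illustration only.
rows = [
  "tab_keyword,,1300000000000",
  "tab_keywordflow,,1300000000001",
  "tab_keywordflow,row500,1300000000002",
  "tab_keywordflow2,,1300000000003",
  "users,,1300000000004"
].sort

matched = meta_rows_for(rows, "tab_keywordflow")
puts matched
```

Including the delimiter in the prefix is what keeps tables with similar names, such as tab_keyword and tab_keywordflow2 in this sample, out of the delete loop.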

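That is my approach. If the two clusters can communicate with each other, things are even simpler: you can transfer the files directly, for example with scp.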

熱AI工具

Undresser.AI Undress

人工智慧驅動的應用程序,用於創建逼真的裸體照片

AI Clothes Remover

用於從照片中去除衣服的線上人工智慧工具。

Undress AI Tool

免費脫衣圖片

Clothoff.io

AI脫衣器

Video Face Swap

使用我們完全免費的人工智慧換臉工具,輕鬆在任何影片中換臉!

熱門文章

熱工具

記事本++7.3.1

好用且免費的程式碼編輯器

SublimeText3漢化版

中文版,非常好用

禪工作室 13.0.1

強大的PHP整合開發環境

Dreamweaver CS6

視覺化網頁開發工具

SublimeText3 Mac版

神級程式碼編輯軟體(SublimeText3)

開源!超越ZoeDepth! DepthFM:快速且精確的單目深度估計!

Apr 03, 2024 pm 12:04 PM

開源!超越ZoeDepth! DepthFM:快速且精確的單目深度估計!

Apr 03, 2024 pm 12:04 PM

0.這篇文章乾了啥?提出了DepthFM:一個多功能且快速的最先進的生成式單目深度估計模型。除了傳統的深度估計任務外,DepthFM還展示了在深度修復等下游任務中的最先進能力。 DepthFM效率高,可以在少數推理步驟內合成深度圖。以下一起來閱讀這項工作~1.論文資訊標題:DepthFM:FastMonocularDepthEstimationwithFlowMatching作者:MingGui,JohannesS.Fischer,UlrichPrestel,PingchuanMa,Dmytr

使用ddrescue在Linux上恢復數據

Mar 20, 2024 pm 01:37 PM

使用ddrescue在Linux上恢復數據

Mar 20, 2024 pm 01:37 PM

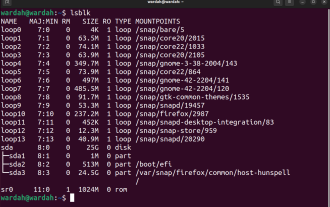

DDREASE是一種用於從檔案或區塊裝置(如硬碟、SSD、RAM磁碟、CD、DVD和USB儲存裝置)復原資料的工具。它將資料從一個區塊設備複製到另一個區塊設備,留下損壞的資料區塊,只移動好的資料區塊。 ddreasue是一種強大的恢復工具,完全自動化,因為它在恢復操作期間不需要任何干擾。此外,由於有了ddasue地圖文件,它可以隨時停止和恢復。 DDREASE的其他主要功能如下:它不會覆寫恢復的數據,但會在迭代恢復的情況下填補空白。但是,如果指示工具明確執行此操作,則可以將其截斷。將資料從多個檔案或區塊還原到單

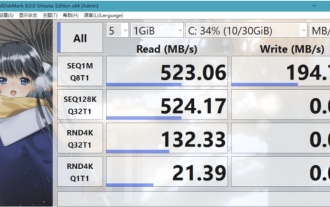

crystaldiskmark是什麼軟體? -crystaldiskmark如何使用?

Mar 18, 2024 pm 02:58 PM

crystaldiskmark是什麼軟體? -crystaldiskmark如何使用?

Mar 18, 2024 pm 02:58 PM

CrystalDiskMark是一款適用於硬碟的小型HDD基準測試工具,可快速測量順序和隨機讀取/寫入速度。接下來就讓小編為大家介紹一下CrystalDiskMark,以及crystaldiskmark如何使用吧~一、CrystalDiskMark介紹CrystalDiskMark是一款廣泛使用的磁碟效能測試工具,用於評估機械硬碟和固態硬碟(SSD)的讀取和寫入速度和隨機I/O性能。它是一款免費的Windows應用程序,並提供用戶友好的介面和各種測試模式來評估硬碟效能的不同方面,並被廣泛用於硬體評

foobar2000怎麼下載? -foobar2000怎麼使用

Mar 18, 2024 am 10:58 AM

foobar2000怎麼下載? -foobar2000怎麼使用

Mar 18, 2024 am 10:58 AM

foobar2000是一款能隨時收聽音樂資源的軟體,各種音樂無損音質帶給你,增強版本的音樂播放器,讓你得到更全更舒適的音樂體驗,它的設計理念是將電腦端的高級音頻播放器移植到手機上,提供更便捷高效的音樂播放體驗,介面設計簡潔明了易於使用它採用了極簡的設計風格,沒有過多的裝飾和繁瑣的操作能夠快速上手,同時還支持多種皮膚和主題,根據自己的喜好進行個性化設置,打造專屬的音樂播放器支援多種音訊格式的播放,它還支援音訊增益功能根據自己的聽力情況調整音量大小,避免過大的音量對聽力造成損害。接下來就讓小編為大

Google狂喜:JAX性能超越Pytorch、TensorFlow!或成GPU推理訓練最快選擇

Apr 01, 2024 pm 07:46 PM

Google狂喜:JAX性能超越Pytorch、TensorFlow!或成GPU推理訓練最快選擇

Apr 01, 2024 pm 07:46 PM

谷歌力推的JAX在最近的基準測試中表現已經超過Pytorch和TensorFlow,7項指標排名第一。而且測試並不是JAX性能表現最好的TPU上完成的。雖然現在在開發者中,Pytorch依然比Tensorflow更受歡迎。但未來,也許有更多的大型模型會基於JAX平台進行訓練和運行。模型最近,Keras團隊為三個後端(TensorFlow、JAX、PyTorch)與原生PyTorch實作以及搭配TensorFlow的Keras2進行了基準測試。首先,他們為生成式和非生成式人工智慧任務選擇了一組主流

微信聊天記錄怎麼移轉到新手機

Mar 26, 2024 pm 04:48 PM

微信聊天記錄怎麼移轉到新手機

Mar 26, 2024 pm 04:48 PM

1.在舊裝置上開啟微信app,點選右下角的【我】,選擇【設定】功能,點選【聊天】。 2.選擇【聊天記錄遷移與備份】,點選【遷移】,選擇要遷移設備的平台。 3.點選【擇需要遷移的聊天】,點選左下角的【全選】或自主選擇聊天記錄。 4.選擇完畢後,點選右下角的【開始】,使用新裝置登入此微信帳號。 5.然後掃描該二維碼即可開始遷移聊天記錄,用戶只需等待遷移完成即可。

BTCC教學:如何在BTCC交易所綁定使用MetaMask錢包?

Apr 26, 2024 am 09:40 AM

BTCC教學:如何在BTCC交易所綁定使用MetaMask錢包?

Apr 26, 2024 am 09:40 AM

MetaMask(中文也叫小狐狸錢包)是一款免費的、廣受好評的加密錢包軟體。目前,BTCC已支援綁定MetaMask錢包,綁定後可使用MetaMask錢包進行快速登錄,儲值、買幣等,且首次綁定還可獲得20USDT體驗金。在BTCCMetaMask錢包教學中,我們將詳細介紹如何註冊和使用MetaMask,以及如何在BTCC綁定並使用小狐狸錢包。 MetaMask錢包是什麼? MetaMask小狐狸錢包擁有超過3,000萬用戶,是當今最受歡迎的加密貨幣錢包之一。它可免費使用,可作為擴充功能安裝在網絡

網易信箱大師怎麼用

Mar 27, 2024 pm 05:32 PM

網易信箱大師怎麼用

Mar 27, 2024 pm 05:32 PM

網易郵箱,作為中國網友廣泛使用的一種電子郵箱,一直以來以其穩定、高效的服務贏得了用戶的信賴。而網易信箱大師,則是專為手機使用者打造的信箱軟體,它大大簡化了郵件的收發流程,讓我們的郵件處理變得更加便利。那麼網易信箱大師該如何使用,具體又有哪些功能呢,下文中本站小編將為大家帶來詳細的內容介紹,希望能幫助到大家!首先,您可以在手機應用程式商店搜尋並下載網易信箱大師應用程式。在應用寶或百度手機助手中搜尋“網易郵箱大師”,然後按照提示進行安裝即可。下載安裝完成後,我們打開網易郵箱帳號並進行登錄,登入介面如下圖所示