Python內嵌的集合類型有list、tuple、set、dict。

列表list:看似陣列,但比陣列強大,支援索引、切片、尋找、增加等功能。

元組tuple:功能跟list差不多,但一旦生成,長度及元素都不可變(元素的元素還是可變),似乎就是一更輕量級、安全的list。

字典dict:鍵值對結構雜湊表,跟雜湊表的性質一樣,key無序且不重複,增刪改方便快速。

set:無序且不重複的集合,就是一個只有鍵沒有值的dict,Java的HashSet就是採用HashMap實現,但願python不會是這樣,畢竟set不需要value,省去了很多指針。

Generator:

稱為產生器,或是清單推導式,是python中有一個特殊的資料型別,其實並不是資料結構,只包含演算法和暫存的狀態,並且具有迭代的功能。

先看看它們的記憶體使用情況,分別用產生器產生100000個元素的set, dict, generator, tuple, list。消耗的記憶體dict, set, list, tuple依序減少,產生的物件大小也是一樣。由於generator不會產生資料表,所以不需要消耗記憶體:

import sys

from memory_profiler import profile

@profile

def create_data(data_size):

data_generator = (x for x in xrange(data_size))

data_set = {x for x in xrange(data_size)}

data_dict = {x:None for x in xrange(data_size)}

data_tuple = tuple(x for x in xrange(data_size))

data_list = [x for x in xrange(data_size)]

return data_set, data_dict, data_generator, data_tuple, data_list

data_size = 100000

for data in create_data(data_size):

print data.__class__, sys.getsizeof(data)

Line # Mem usage Increment Line Contents

================================================

14.6 MiB 0.0 MiB @profile

def create_data(data_size):

14.7 MiB 0.0 MiB data_generator = (x for x in xrange(data_size))

21.4 MiB 6.7 MiB data_set = {x for x in xrange(data_size)}

29.8 MiB 8.5 MiB data_dict = {x:None for x in xrange(data_size)}

33.4 MiB 3.6 MiB data_tuple = tuple(x for x in xrange(data_size))

38.2 MiB 4.8 MiB data_list = [x for x in xrange(data_size)]

38.2 MiB 0.0 MiB return data_set, data_dict, data_generator, data_tuple, data_list

<type 'set'> 4194528

<type 'dict'> 6291728

<type 'generator'> 72

<type 'tuple'> 800048

<type 'list'> 824464再看看查找效能,dict,set是常數查找時間(O(1)),list、tuple是線性查找時間(O (n)),用生成器產生指定大小元素的對象,用隨機產生的數字去找:

import time

import sys

import random

from memory_profiler import profile

def create_data(data_size):

data_set = {x for x in xrange(data_size)}

data_dict = {x:None for x in xrange(data_size)}

data_tuple = tuple(x for x in xrange(data_size))

data_list = [x for x in xrange(data_size)]

return data_set, data_dict, data_tuple, data_list

def cost_time(func):

def cost(*args, **kwargs):

start = time.time()

r = func(*args, **kwargs)

cost = time.time() - start

print 'find in %s cost time %s' % (r, cost)

return r, cost #返回数据的类型和方法执行消耗的时间

return cost

@cost_time

def test_find(test_data, data):

for d in test_data:

if d in data:

pass

return data.__class__.__name__

data_size = 100

test_size = 10000000

test_data = [random.randint(0, data_size) for x in xrange(test_size)]

#print test_data

for data in create_data(data_size):

test_find(test_data, data)

输出:

----------------------------------------------

find in <type 'set'> cost time 0.47200012207

find in <type 'dict'> cost time 0.429999828339

find in <type 'tuple'> cost time 5.36500000954

find in <type 'list'> cost time 5.53399991989100個元素的大小的集合,分別找出1000W次,差距非常明顯。不過這些隨機數,都是能在集合中找到。修改一下隨機數方式,產生一半是能查找得到,一半是查找不到的。從列印訊息可以看出在有一半最壞查找例子的情況下,list、tuple表現得更差了。

def randint(index, data_size):

return random.randint(0, data_size) if (x % 2) == 0 else random.randint(data_size, data_size * 2)

test_data = [randint(x, data_size) for x in xrange(test_size)]

输出:

----------------------------------------------

find in <type 'set'> cost time 0.450000047684

find in <type 'dict'> cost time 0.397000074387

find in <type 'tuple'> cost time 7.83299994469

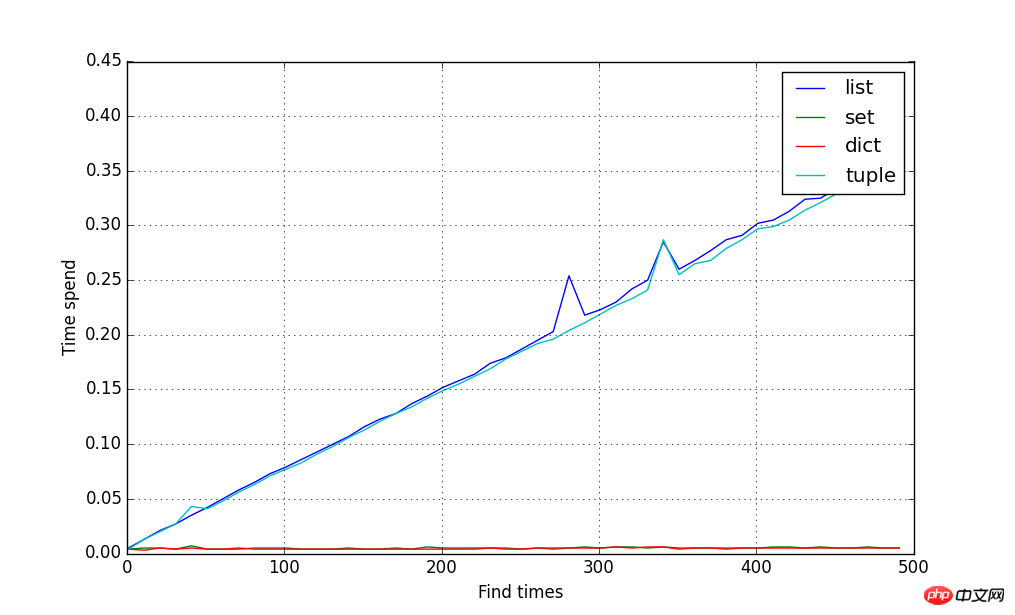

find in <type 'list'> cost time 8.27800011635元素的數量從10增長到500,統計每次查找10W次的時間,用圖擬合時間消耗的曲線,結果如下圖,結果證明dict, set不管元素多少,一直都是常數查找時間,dict、tuple隨著元素增長,呈現線性增長時間:

import matplotlib.pyplot as plot

from numpy import *

data_size = array([x for x in xrange(10, 500, 10)])

test_size = 100000

cost_result = {}

for size in data_size:

test_data = [randint(x, size) for x in xrange(test_size)]

for data in create_data(size):

name, cost = test_find(test_data, data) #装饰器函数返回函数的执行时间

cost_result.setdefault(name, []).append(cost)

plot.figure(figsize=(10, 6))

xline = data_size

for data_type, result in cost_result.items():

yline = array(result)

plot.plot(xline, yline, label=data_type)

plot.ylabel('Time spend')

plot.xlabel('Find times')

plot.grid()

plot.legend()

plot.show()

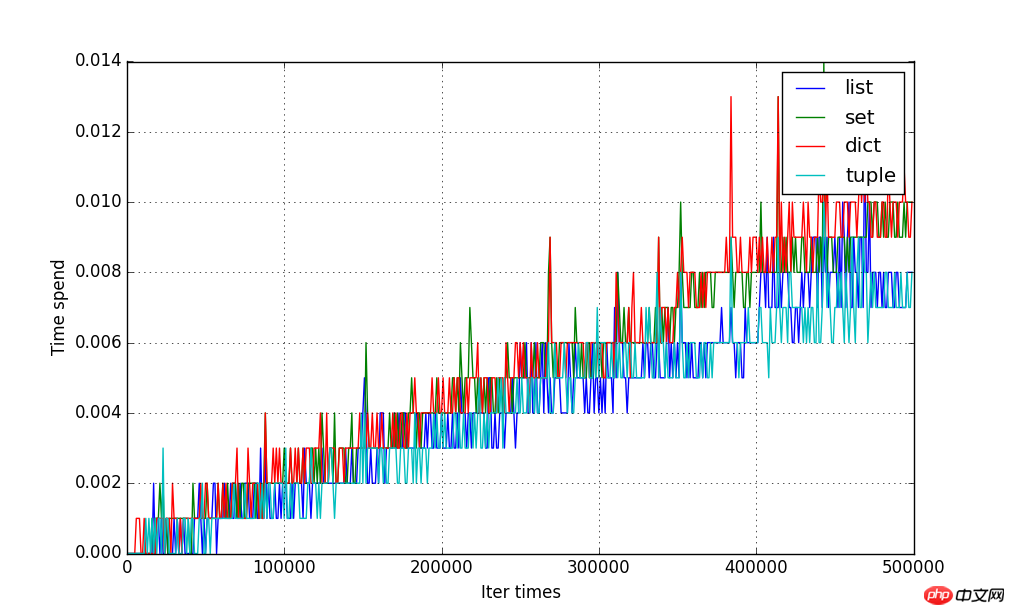

#迭代的時間,區別很微弱,dict、set要略微消耗時間多一點:

@cost_time

def test_iter(data):

for d in data:

pass

return data.__class__ .__name__

data_size = array([x for x in xrange(1, 500000, 1000)])

cost_result = {}

for size in data_size:

for data in create_data(size):

name, cost = test_iter(data)

cost_result.setdefault(name, []).append(cost)

#拟合曲线图

plot.figure(figsize=(10, 6))

xline = data_size

for data_type, result in cost_result.items():

yline = array(result)

plot.plot(xline, yline, label=data_type)

plot.ylabel('Time spend')

plot.xlabel('Iter times')

plot.grid()

plot.legend()

plot.show()

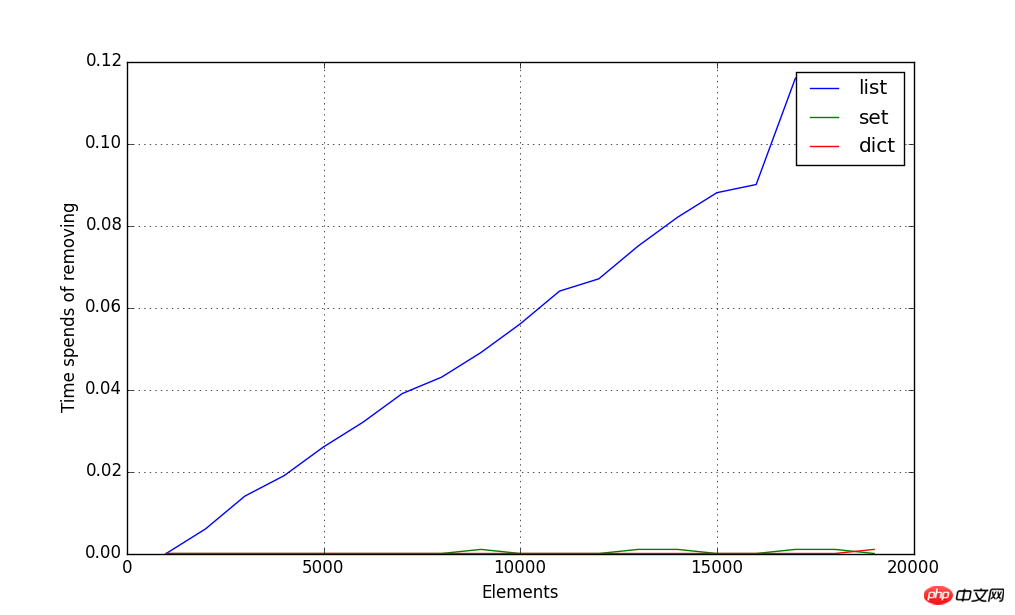

刪除元素消耗時間圖示如下,隨機刪除1000個元素,tuple類型不能刪除元素,所以不做比較:

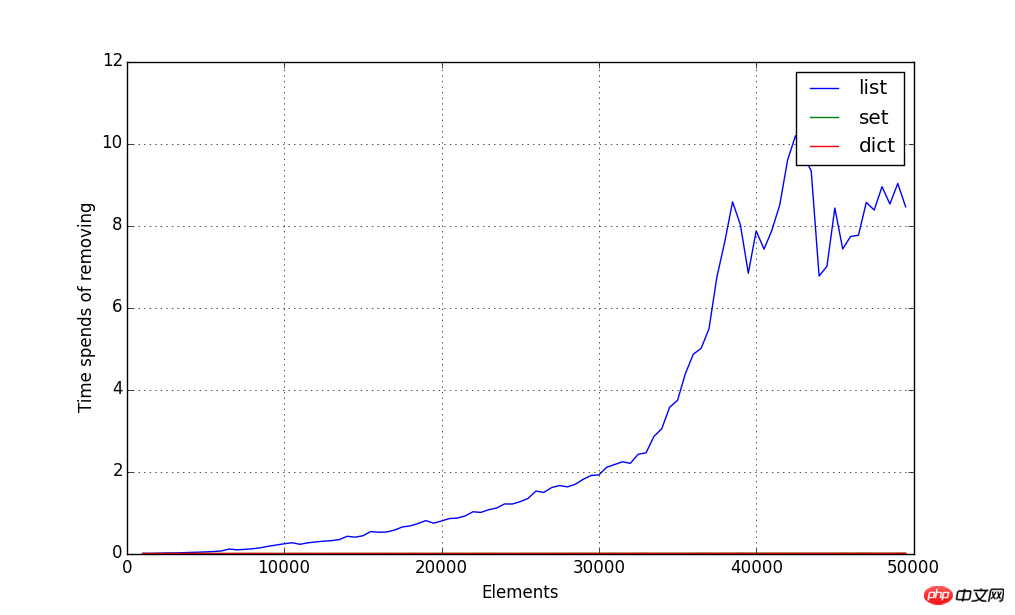

#隨機刪除一半的元素,圖形就以指數時間(O(n2))成長了:

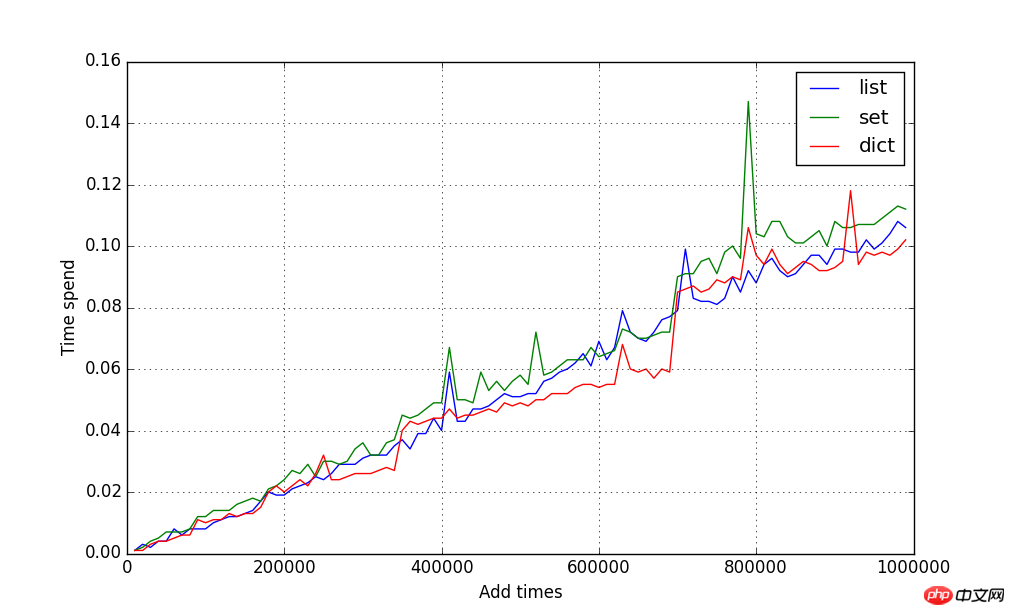

加入元素消耗的時間圖示如下,統計以10000為增量大小的元素個數的添加時間,都是線性增長時間,看不出有什麼差別,tuple類型不能添加新的元素,所以不做比較:

@cost_time

def test_dict_add(test_data, data):

for d in test_data:

data[d] = None

return data.__class__ .__name__

@cost_time

def test_set_add(test_data, data):

for d in test_data:

data.add(d)

return data.__class__ .__name__

@cost_time

def test_list_add(test_data, data):

for d in test_data:

data.append(d)

return data.__class__ .__name__

#初始化数据,指定每种类型对应它添加元素的方法

def init_data():

test_data = {

'list': (list(), test_list_add),

'set': (set(), test_set_add),

'dict': (dict(), test_dict_add)

}

return test_data

#每次检测10000增量大小的数据的添加时间

data_size = array([x for x in xrange(10000, 1000000, 10000)])

cost_result = {}

for size in data_size:

test_data = [x for x in xrange(size)]

for data_type, (data, add) in init_data().items():

name, cost = add(test_data, data) #返回方法的执行时间

cost_result.setdefault(data_type, []).append(cost)

plot.figure(figsize=(10, 6))

xline = data_size

for data_type, result in cost_result.items():

yline = array(result)

plot.plot(xline, yline, label=data_type)

plot.ylabel('Time spend')

plot.xlabel('Add times')

plot.grid()

plot.legend()

plot.show()

以上是Python集合類型(list tuple dict set generator)圖文詳解的詳細內容。更多資訊請關注PHP中文網其他相關文章!