介紹python 資料抓取三種方法

#免費學習推薦:python影片教學

三種資料抓取的方法

- 正則表達式(re庫)

- BeautifulSoup(bs4)

- lxml

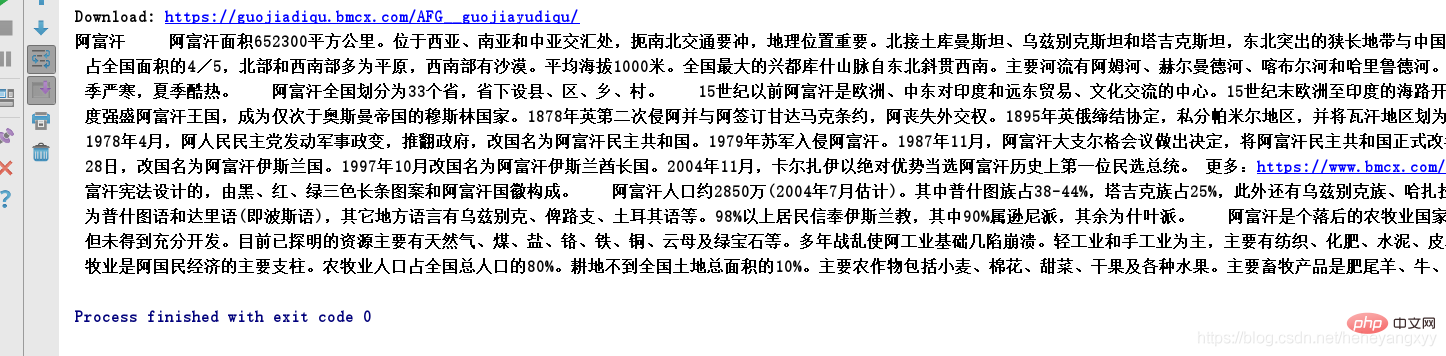

*利用先前建構的下載網頁函數,取得目標網頁的html,我們以https://guojiadiqu.bmcx.com/AFG__guojiayudiqu/為例,取得html。

from get_html import download url = 'https://guojiadiqu.bmcx.com/AFG__guojiayudiqu/'page_content = download(url)

*假設我們需要爬取該網頁中的國家名稱和概況,我們依序使用這三種資料抓取的方法來實現資料抓取。

1.正規表示式

from get_html import downloadimport re

url = 'https://guojiadiqu.bmcx.com/AFG__guojiayudiqu/'page_content = download(url)country = re.findall('class="h2dabiaoti">(.*?)', page_content) #注意返回的是listsurvey_data = re.findall('<tr><td>(.*?)</td></tr>', page_content)survey_info_list = re.findall('<p> (.*?)</p>', survey_data[0])survey_info = ''.join(survey_info_list)print(country[0],survey_info)2.BeautifulSoup(bs4)

from get_html import downloadfrom bs4 import BeautifulSoup

url = 'https://guojiadiqu.bmcx.com/AFG__guojiayudiqu/'html = download(url)#创建 beautifulsoup 对象soup = BeautifulSoup(html,"html.parser")#搜索country = soup.find(attrs={'class':'h2dabiaoti'}).text

survey_info = soup.find(attrs={'id':'wzneirong'}).textprint(country,survey_info)3.lxml

from get_html import downloadfrom lxml import etree #解析树url = 'https://guojiadiqu.bmcx.com/AFG__guojiayudiqu/'page_content = download(url)selector = etree.HTML(page_content)#可进行xpath解析country_select = selector.xpath('//*[@id="main_content"]/h2') #返回列表for country in country_select:

print(country.text)survey_select = selector.xpath('//*[@id="wzneirong"]/p')for survey_content in survey_select:

print(survey_content.text,end='')執行結果:

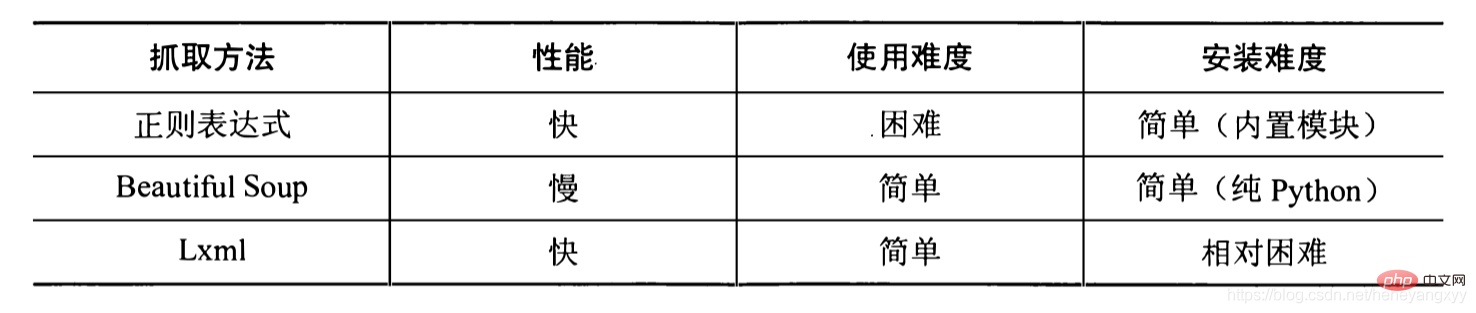

最後,引用《用python寫網路爬蟲》中三種方法的效能對比,如下圖:

僅供參考。

#相關免費學習推薦:python教學(影片)

以上是介紹python 資料抓取三種方法的詳細內容。更多資訊請關注PHP中文網其他相關文章!

熱AI工具

Undresser.AI Undress

人工智慧驅動的應用程序,用於創建逼真的裸體照片

AI Clothes Remover

用於從照片中去除衣服的線上人工智慧工具。

Undress AI Tool

免費脫衣圖片

Clothoff.io

AI脫衣器

Video Face Swap

使用我們完全免費的人工智慧換臉工具,輕鬆在任何影片中換臉!

熱門文章

熱工具

記事本++7.3.1

好用且免費的程式碼編輯器

SublimeText3漢化版

中文版,非常好用

禪工作室 13.0.1

強大的PHP整合開發環境

Dreamweaver CS6

視覺化網頁開發工具

SublimeText3 Mac版

神級程式碼編輯軟體(SublimeText3)

PHP和Python:解釋了不同的範例

Apr 18, 2025 am 12:26 AM

PHP和Python:解釋了不同的範例

Apr 18, 2025 am 12:26 AM

PHP主要是過程式編程,但也支持面向對象編程(OOP);Python支持多種範式,包括OOP、函數式和過程式編程。 PHP適合web開發,Python適用於多種應用,如數據分析和機器學習。

在PHP和Python之間進行選擇:指南

Apr 18, 2025 am 12:24 AM

在PHP和Python之間進行選擇:指南

Apr 18, 2025 am 12:24 AM

PHP適合網頁開發和快速原型開發,Python適用於數據科學和機器學習。 1.PHP用於動態網頁開發,語法簡單,適合快速開發。 2.Python語法簡潔,適用於多領域,庫生態系統強大。

Python vs. JavaScript:學習曲線和易用性

Apr 16, 2025 am 12:12 AM

Python vs. JavaScript:學習曲線和易用性

Apr 16, 2025 am 12:12 AM

Python更適合初學者,學習曲線平緩,語法簡潔;JavaScript適合前端開發,學習曲線較陡,語法靈活。 1.Python語法直觀,適用於數據科學和後端開發。 2.JavaScript靈活,廣泛用於前端和服務器端編程。

PHP和Python:深入了解他們的歷史

Apr 18, 2025 am 12:25 AM

PHP和Python:深入了解他們的歷史

Apr 18, 2025 am 12:25 AM

PHP起源於1994年,由RasmusLerdorf開發,最初用於跟踪網站訪問者,逐漸演變為服務器端腳本語言,廣泛應用於網頁開發。 Python由GuidovanRossum於1980年代末開發,1991年首次發布,強調代碼可讀性和簡潔性,適用於科學計算、數據分析等領域。

vs code 可以在 Windows 8 中運行嗎

Apr 15, 2025 pm 07:24 PM

vs code 可以在 Windows 8 中運行嗎

Apr 15, 2025 pm 07:24 PM

VS Code可以在Windows 8上運行,但體驗可能不佳。首先確保系統已更新到最新補丁,然後下載與系統架構匹配的VS Code安裝包,按照提示安裝。安裝後,注意某些擴展程序可能與Windows 8不兼容,需要尋找替代擴展或在虛擬機中使用更新的Windows系統。安裝必要的擴展,檢查是否正常工作。儘管VS Code在Windows 8上可行,但建議升級到更新的Windows系統以獲得更好的開發體驗和安全保障。

visual studio code 可以用於 python 嗎

Apr 15, 2025 pm 08:18 PM

visual studio code 可以用於 python 嗎

Apr 15, 2025 pm 08:18 PM

VS Code 可用於編寫 Python,並提供許多功能,使其成為開發 Python 應用程序的理想工具。它允許用戶:安裝 Python 擴展,以獲得代碼補全、語法高亮和調試等功能。使用調試器逐步跟踪代碼,查找和修復錯誤。集成 Git,進行版本控制。使用代碼格式化工具,保持代碼一致性。使用 Linting 工具,提前發現潛在問題。

vscode怎麼在終端運行程序

Apr 15, 2025 pm 06:42 PM

vscode怎麼在終端運行程序

Apr 15, 2025 pm 06:42 PM

在 VS Code 中,可以通過以下步驟在終端運行程序:準備代碼和打開集成終端確保代碼目錄與終端工作目錄一致根據編程語言選擇運行命令(如 Python 的 python your_file_name.py)檢查是否成功運行並解決錯誤利用調試器提升調試效率

vscode 擴展是否是惡意的

Apr 15, 2025 pm 07:57 PM

vscode 擴展是否是惡意的

Apr 15, 2025 pm 07:57 PM

VS Code 擴展存在惡意風險,例如隱藏惡意代碼、利用漏洞、偽裝成合法擴展。識別惡意擴展的方法包括:檢查發布者、閱讀評論、檢查代碼、謹慎安裝。安全措施還包括:安全意識、良好習慣、定期更新和殺毒軟件。