First to Bring a "Dean" into Model Distillation: Large-Scale Compression That Outperforms 24 SOTA Methods

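As deep learning models grow deeper and video data grows in volume, AI algorithms depend more and more on computing resources. To improve the performance and efficiency of deep models, this work explores the distillability and sparsability of models and proposes a unified model compression technique based on a "dean - teacher - student" paradigm.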

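The work was carried out jointly by a research team from Renmin Zhongke (人民中科) and the Institute of Automation, Chinese Academy of Sciences, and the paper was published in IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), a top international journal in artificial intelligence. It is the first to introduce a "dean" role into model distillation, unifying the distillation and pruning of deep models.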

Paper: https://ieeexplore.ieee.org/abstract/document/9804342

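The result has already been applied in Baize (白澤), a cross-modal intelligent search engine developed in-house by Renmin Zhongke. Baize breaks down the barriers between how information is expressed in different modalities such as text, images, audio, and video. It maps these modalities into a unified feature representation space and, with video at the core, learns a unified distance metric across modalities, bridging the semantic gap between text, speech, video, and other multimodal content to provide a single unified search capability.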

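However, with massive amounts of online data, video data in particular, cross-modal deep models consume ever more computing resources. Building on this research, Baize can compress its models substantially while preserving algorithm performance, enabling high-throughput, low-power cross-modal understanding and search. In initial real-world use, the technique compresses the parameter count of large models by more than four times on average. This greatly reduces the models' consumption of high-performance computing resources such as GPU servers, and it also allows large models that could not previously be deployed at the edge to run there at low power after distillation and compression.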

A joint learning framework for model compression

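Deep models can be compressed and accelerated through distillation learning or structured sparse pruning, but both approaches have limitations. Distillation learning trains a lightweight model (the student network) to mimic a large, complex model (the teacher network). Under the teacher network's guidance, the student network can achieve better performance than it would if trained on its own.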
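To make the teacher-student idea concrete, here is a minimal sketch of a commonly used distillation loss: the KL divergence between temperature-softened teacher and student output distributions. This is the generic textbook formulation, not necessarily the loss used in the paper; the function names, the temperature value, and the example logits are all assumptions chosen for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits, softened by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=4.0):
    """KL(teacher || student) on softened distributions, scaled by T^2 (a common convention)."""
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's soft predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl

# Hypothetical logits for a 3-class problem.
teacher = [5.0, 1.0, -2.0]
close_student = [4.0, 1.5, -1.0]   # roughly agrees with the teacher
far_student = [-2.0, 1.0, 5.0]     # ranks the classes in the opposite order

print(distillation_loss(close_student, teacher))
print(distillation_loss(far_student, teacher))
```

The loss is zero when the student reproduces the teacher's logits and grows as the two distributions diverge, which is what lets the teacher's outputs act as a training signal for the student.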

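However, distillation learning algorithms focus only on improving the student network's performance and often overlook the importance of network structure. The student network's structure is generally predefined and stays fixed during training.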

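Structured sparse pruning, or filter pruning, aims to prune a redundant, cumbersome network into a sparse, compact one. However, pruning is used only to obtain a compact structure, and none of the existing methods make full use of the "knowledge" contained in the original complex model. To balance model performance and size, recent work has combined distillation learning with structured sparse pruning, but these methods are limited to simply combining the loss functions.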
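As an illustration of what filter pruning does, the sketch below ranks the filters of one layer by L1 norm and keeps the largest ones. The L1-magnitude criterion is a widely used baseline and is an assumption here, not the criterion of the method described in this article; the helper names and the toy weights are hypothetical.

```python
def filter_l1_norms(filters):
    """L1 norm of each filter; each filter is given as a flat list of weights."""
    return [sum(abs(w) for w in f) for f in filters]

def prune_filters(filters, prune_rate):
    """Drop the `prune_rate` fraction of filters with the smallest L1 norms.

    Returns the indices of the kept filters (in original order) and the kept filters.
    """
    n_drop = int(len(filters) * prune_rate)
    n_keep = max(1, len(filters) - n_drop)  # always keep at least one filter
    norms = filter_l1_norms(filters)
    ranked = sorted(range(len(filters)), key=lambda i: norms[i], reverse=True)
    kept = sorted(ranked[:n_keep])
    return kept, [filters[i] for i in kept]

# Hypothetical layer with four tiny filters.
layer = [
    [0.9, -0.8, 0.7],
    [0.01, 0.02, -0.01],
    [0.5, 0.4, -0.6],
    [0.0, 0.03, 0.0],
]
kept, pruned = prune_filters(layer, prune_rate=0.5)
print(kept)  # [0, 2]
```

Because whole filters are removed, the pruned layer is a smaller dense layer rather than a sparse matrix, which is why structured pruning translates directly into faster inference.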

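To analyze these issues in depth, the study first trains models in a compression-aware manner. By analyzing model performance and structure, it finds that deep models have two important properties: distillability and sparsability.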

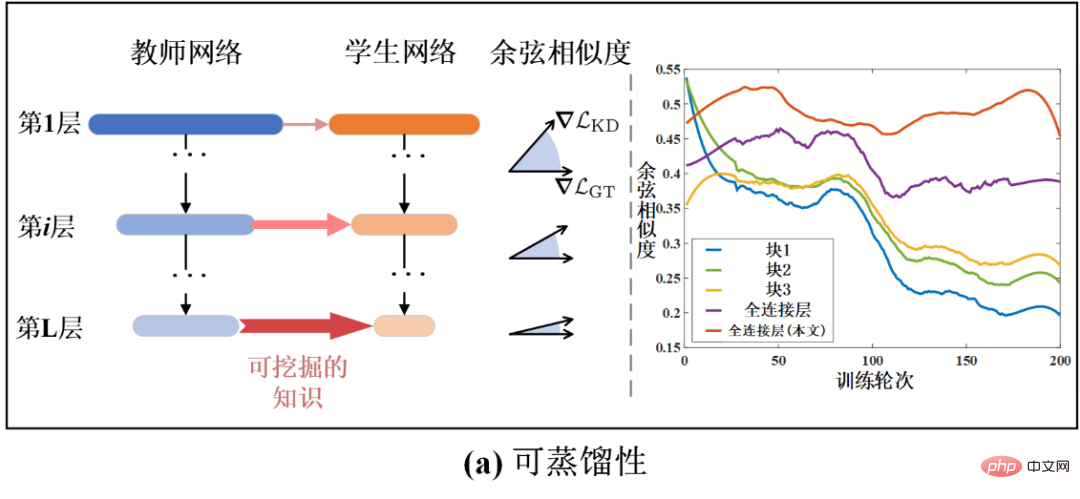

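Specifically, distillability refers to the density of effective knowledge that can be distilled from the teacher network. It can be measured by the performance gain the student network obtains under the teacher network's guidance. For example, a student network with higher distillability can achieve higher performance. Distillability can also be analyzed quantitatively at the level of individual network layers.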

In Figure 1-(a), the bars show the cosine similarity between the gradient of the distillation loss and the gradient of the ground-truth classification loss. A larger cosine similarity indicates that the knowledge currently being distilled is more helpful to model performance, so cosine similarity can also serve as a measure of distillability. Figure 1-(a) shows that distillability gradually increases with layer depth, which explains why distillation supervision is commonly applied to the last few layers of a model. The student model's distillability also differs across training epochs, because the cosine similarity changes as training progresses. It is therefore necessary to analyze the distillability of different layers dynamically during training.
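The layer-wise measurement described above can be sketched as follows: for each layer, take the gradient of the distillation loss and the gradient of the classification loss with respect to that layer's weights, flatten both, and compute their cosine similarity. The layer names and gradient values below are made up for illustration; in practice the gradients would come from a training framework's backward pass.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)

def layerwise_distillability(distill_grads, task_grads):
    """Map each layer name to cos(distillation-loss gradient, classification-loss gradient)."""
    return {
        name: cosine_similarity(distill_grads[name], task_grads[name])
        for name in distill_grads
    }

# Hypothetical flattened gradients for a shallow and a deep layer.
distill_grads = {"layer1": [0.2, -0.5, 0.1], "layer4": [0.3, 0.4, -0.1]}
task_grads = {"layer1": [-0.1, 0.1, 0.4], "layer4": [0.6, 0.8, -0.2]}

scores = layerwise_distillability(distill_grads, task_grads)
for name, score in scores.items():
    print(name, round(score, 3))
```

A score near 1 means the distillation signal pushes the layer in the same direction as the classification objective; a score near zero or below means the two objectives are unrelated or in conflict for that layer at that point in training.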

Sparsability, on the other hand, refers to the pruning rate (or compression rate) a model can reach within a limited loss of accuracy. Higher sparsability means the potential for a higher pruning rate. As shown in Figure 1-(b), different layers or modules of a network exhibit different sparsability. Like distillability, sparsability can be analyzed at the layer level and over the course of training. However, no existing method explores and analyzes distillability and sparsability. Existing methods typically use a fixed training mechanism, which makes it difficult to reach an optimal result.

Figure 1 Schematic diagram of the distillability and sparsability of deep neural networks

To address these problems, this study analyzes the training process of model compression and obtains findings about distillability and sparsability. Inspired by these findings, it proposes a model compression method based on joint learning of dynamic distillability and sparsability. The method dynamically combines distillation learning with structured sparse pruning and adaptively adjusts the joint training mechanism by learning distillability and sparsability.

Unlike the conventional "Teacher-Student" framework, the proposed method can be described as a "Learning-in-School" framework, because it contains three major modules: a teacher network, a student network, and a dean network.

As before, the teacher network teaches the student network. The dean network controls how intensively the student network learns and in what way. By observing the current state of the teacher and student networks, the dean network evaluates the student network's current distillability and sparsability, then dynamically balances and controls the strength of the distillation supervision and the structured sparse pruning supervision.
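The balancing role of the dean can be pictured with a small sketch. In the paper the dean is a learned meta-network; here, purely as an assumption for illustration, it is replaced by a fixed softmax over two scalar scores, and the student objective is written as a task loss plus weighted distillation and sparsity terms. The function names, the `sharpness` parameter, and the form of the objective are all hypothetical.

```python
import math

def dean_weights(distillability, sparsability, sharpness=1.0):
    """Stand-in for the dean network: softmax over the two scores.

    Returns (alpha, beta), the weights for distillation and sparsity supervision,
    which sum to 1.
    """
    a = math.exp(sharpness * distillability)
    b = math.exp(sharpness * sparsability)
    return a / (a + b), b / (a + b)

def student_objective(task_loss, distill_loss, sparsity_penalty,
                      distillability, sparsability):
    """Task loss plus dean-weighted distillation and sparsity terms (illustrative form)."""
    alpha, beta = dean_weights(distillability, sparsability)
    return task_loss + alpha * distill_loss + beta * sparsity_penalty

# When distillability is the higher score, distillation supervision gets more weight.
alpha, beta = dean_weights(distillability=0.9, sparsability=0.2)
print(alpha > beta)
```

The point of the sketch is only the control flow: the weights are recomputed from the current state at every step rather than fixed in advance, which is what distinguishes this scheme from simply adding two loss functions together.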

To optimize the student network, the study proposes a joint optimization algorithm for distillation learning and pruning based on the alternating direction method of multipliers (ADMM). To optimize and update the dean network, it proposes a dean optimization algorithm based on meta-learning. Dynamically adjusting the supervision signal can in turn influence distillability. As shown in Figure 1-(a), the proposed method delays the decline in distillability and raises overall distillability by making sound use of the distilled knowledge.
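To show how ADMM can drive whole groups of weights (such as filters) to exactly zero, here is a toy sketch in scaled form. It is not the paper's algorithm: the network training loss is replaced by a simple quadratic, 0.5 * ||W - W0||^2, so that the weight update has a closed form, and the sparsity term is a group-lasso penalty handled by group soft-thresholding. All names and hyperparameter values are assumptions.

```python
import math

def group_soft_threshold(group, tau):
    """Proximal operator of tau * ||group||_2: shrink the group, or zero it out entirely."""
    norm = math.sqrt(sum(x * x for x in group))
    if norm <= tau:
        return [0.0] * len(group)
    scale = 1.0 - tau / norm
    return [scale * x for x in group]

def admm_group_sparse(w0, lam=1.0, rho=1.0, steps=200):
    """Scaled ADMM for min_W 0.5*||W - w0||^2 + lam * sum_g ||Z_g||_2  subject to W = Z."""
    z = [[0.0] * len(g) for g in w0]  # sparse copy of the weights
    u = [[0.0] * len(g) for g in w0]  # scaled dual variable
    for _ in range(steps):
        # W-update: closed form for the quadratic stand-in loss.
        w = [[(a + rho * (zi - ui)) / (1.0 + rho) for a, zi, ui in zip(g0, gz, gu)]
             for g0, gz, gu in zip(w0, z, u)]
        # Z-update: group soft-thresholding of W + U with threshold lam / rho.
        z = [group_soft_threshold([wi + ui for wi, ui in zip(gw, gu)], lam / rho)
             for gw, gu in zip(w, u)]
        # Dual update: accumulate the constraint violation W - Z.
        u = [[ui + wi - zi for ui, wi, zi in zip(gu, gw, gz)]
             for gu, gw, gz in zip(u, w, z)]
    return z

# Two hypothetical "filters": one strong, one weak.
z = admm_group_sparse([[3.0, 4.0], [0.1, 0.1]], lam=1.0)
print(z)
```

In this toy setting the strong group is shrunk but kept and the weak group is set exactly to zero. In a real network the W-update would be a few steps of gradient descent on the training (and distillation) loss plus the quadratic coupling term, rather than a closed-form expression.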

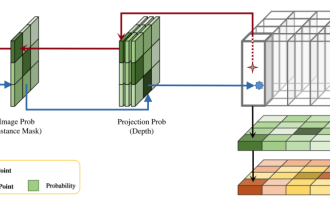

The overall framework and flow of the method are shown in Figure 2. The framework contains three major modules: the teacher network, the student network, and the dean network. The initial complex, redundant network to be compressed and pruned serves as the teacher network; during subsequent training, the original network as it is gradually sparsified serves as the student network. The dean network is a meta-network that takes information from the teacher and student networks as input to measure the current distillability and sparsability, and thereby controls the supervision strength of distillation learning and sparsification.

In this way, at every moment the student network is guided by dynamically distilled knowledge and sparsified. For example, when the student network has higher distillability, the dean lets a stronger distillation supervision signal guide it (see the pink arrow in Figure 2); conversely, when the student network has higher sparsability, the dean applies a stronger sparsity supervision signal to it (see the orange arrow in Figure 2).

Figure 2 Schematic diagram of the model compression algorithm based on joint learning of distillability and sparsability

Experimental results

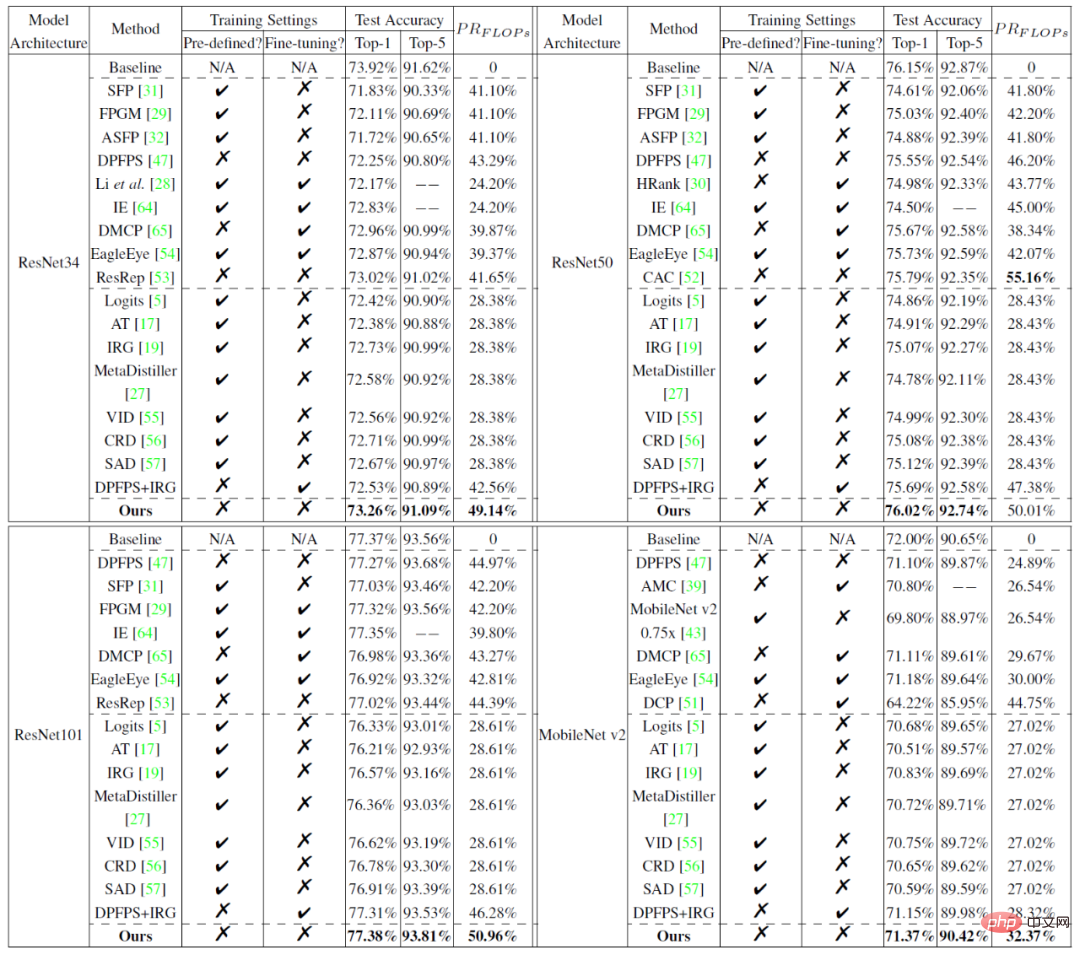

The experiments compare the proposed method with 24 mainstream model compression methods (including sparse pruning methods and distillation learning methods) on the small-scale dataset CIFAR and the large-scale dataset ImageNet. The results, summarized in Tables 1 and 2, show the advantage of the proposed method.

Table 1 Performance comparison of model pruning results on CIFAR10:

Table 2 Performance comparison of model pruning results on ImageNet:

For more research details, please refer to the original paper.
