How to Use YOLO v12 for Object Detection?

YOLO (You Only Look Once) has long been a leading real-time object detection framework, with each iteration improving on the last. The latest version, YOLO v12, introduces changes that raise accuracy while keeping real-time processing speeds. This article explores the key innovations in YOLO v12 and how it improves on previous versions while keeping computational costs low.

Table of contents

- What’s New in YOLO v12?

- Key Improvements Over Previous Versions

- Computational Efficiency Enhancements

- YOLO v12 Model Variants

- Let’s compare YOLO v11 and YOLO v12 Models

- Expert Opinions on YOLOv11 and YOLOv12

- Conclusion

What’s New in YOLO v12?

Previously, YOLO models relied on Convolutional Neural Networks (CNNs) for object detection because of their speed and efficiency. YOLO v12 instead builds on attention mechanisms, a concept best known from Transformer models, which allow it to recognize patterns more effectively. Attention has traditionally been too slow for real-time object detection, but YOLO v12 integrates it while maintaining YOLO's speed, resulting in an attention-centric YOLO framework.

Key Improvements Over Previous Versions

1. Attention-Centric Framework

YOLO v12 combines attention mechanisms with CNNs, resulting in a model that is more accurate at comparable speed. Unlike its predecessors, which relied primarily on CNNs, YOLO v12 introduces optimized attention modules to improve object recognition without adding unnecessary latency.

2. Superior Performance Metrics

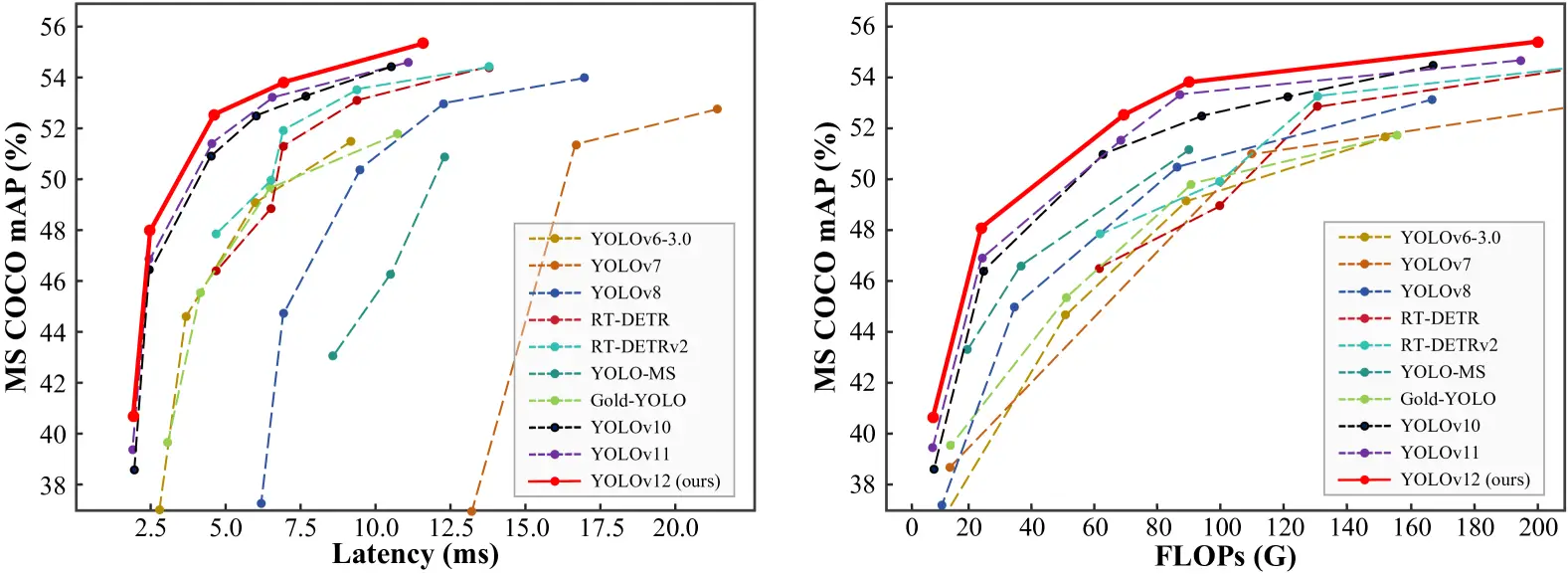

Comparing performance metrics across different YOLO versions and real-time detection models reveals that YOLO v12 achieves higher accuracy while maintaining low latency.

- The mAP (Mean Average Precision) values on datasets like COCO show YOLO v12 outperforming YOLO v11 and YOLO v10 while maintaining comparable speed.

- The smallest variant, YOLO v12 Nano, achieves 40.6% mAP with an inference latency of just 1.64 milliseconds per image on an NVIDIA T4 GPU. This is more accurate than the comparable YOLO v10 and YOLO v11 models at a similar speed.
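To put the 1.64 ms latency figure in perspective, per-image latency can be converted to throughput as 1000 / latency in milliseconds. The helper below is an illustrative sketch (the function name is hypothetical); it assumes images are processed one at a time and ignores pre- and post-processing.

```python
def latency_ms_to_fps(latency_ms: float) -> float:
    """Convert per-image latency in milliseconds to images per second."""
    if latency_ms <= 0:
        raise ValueError("latency_ms must be positive")
    return 1000.0 / latency_ms


# 1.64 ms per image is the figure quoted above for an NVIDIA T4.
print(round(latency_ms_to_fps(1.64)))  # roughly 610 images per second
```

End-to-end video pipelines usually run well below this number, because decoding, drawing, and writing frames add their own cost.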

3. Outperforming Non-YOLO Models

YOLO v12 not only surpasses previous YOLO versions but also outperforms other real-time object detection frameworks, such as RT-DETR and RT-DETRv2. These alternative models have higher latency yet fail to match YOLO v12's accuracy.

Computational Efficiency Enhancements

A major concern with integrating attention mechanisms into YOLO models has been their high computational cost and memory inefficiency. YOLO v12 addresses these issues through several key innovations:

1. Flash Attention for Memory Efficiency

Traditional attention mechanisms consume a large amount of memory, making them impractical for real-time applications. YOLO v12 adopts FlashAttention, an I/O-aware attention implementation that reduces memory-access overhead and speeds up inference.
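The core idea behind FlashAttention is to process keys and values in blocks while keeping a running softmax, so the full n × n score matrix is never stored. The NumPy sketch below illustrates only that tiling idea on the CPU; it is not the fused GPU kernel YOLO v12 relies on, and the function names and block size are assumptions made for the example.

```python
import numpy as np


def naive_attention(q, k, v):
    """Reference attention that materializes the full (n, n) score matrix."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    return (weights / weights.sum(axis=-1, keepdims=True)) @ v


def tiled_attention(q, k, v, block=16):
    """Same result, computed one key/value block at a time with a running softmax."""
    n, d = q.shape
    out = np.zeros((n, v.shape[-1]))
    row_max = np.full((n, 1), -np.inf)  # running maximum score per query
    row_sum = np.zeros((n, 1))          # running softmax denominator per query
    for start in range(0, k.shape[0], block):
        k_blk = k[start:start + block]
        v_blk = v[start:start + block]
        scores = q @ k_blk.T / np.sqrt(d)  # only (n, block) scores at a time
        new_max = np.maximum(row_max, scores.max(axis=-1, keepdims=True))
        rescale = np.exp(row_max - new_max)  # correct earlier partial results
        weights = np.exp(scores - new_max)
        row_sum = row_sum * rescale + weights.sum(axis=-1, keepdims=True)
        out = out * rescale + weights @ v_blk
        row_max = new_max
    return out / row_sum


rng = np.random.default_rng(0)
q, k, v = rng.normal(size=(3, 40, 8))
print(np.allclose(tiled_attention(q, k, v), naive_attention(q, k, v)))
```

The real speed-up comes from keeping each block in fast on-chip GPU memory, which this sketch does not model.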

2. Area Attention for Lower Computation Cost

To further optimize efficiency, YOLO v12 employs Area Attention, which divides the feature map into a small number of equal-sized areas and computes attention within each area instead of across the entire feature map. This substantially reduces computation cost while retaining a large receptive field and accuracy.

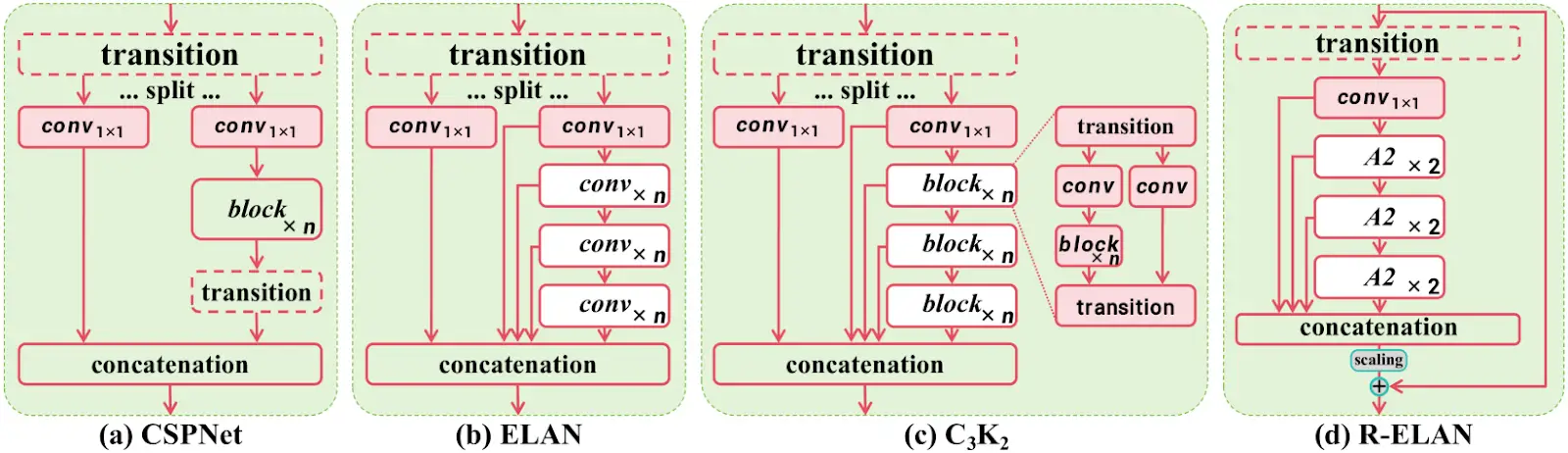
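One way to picture Area Attention, following the YOLOv12 paper's description of splitting the feature map into equal areas, is the NumPy sketch below. It is a simplified illustration rather than the model's actual layer: it uses a single head, no learned projections, and assumes the flattened feature map is split into contiguous strips. The function names are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def area_attention(q, k, v, num_areas=4):
    """Attention computed independently inside each of `num_areas` token groups.

    q, k, v have shape (n, d), where n is the number of feature-map positions
    flattened row by row, so each group corresponds to a horizontal strip.
    """
    n, d = q.shape
    assert n % num_areas == 0, "n must be divisible by num_areas"
    m = n // num_areas
    qa = q.reshape(num_areas, m, d)
    ka = k.reshape(num_areas, m, d)
    va = v.reshape(num_areas, m, d)
    scores = qa @ ka.transpose(0, 2, 1) / np.sqrt(d)  # (num_areas, m, m)
    return (softmax(scores) @ va).reshape(n, d)


def attention_score_count(n, num_areas=1):
    """Number of query-key scores computed for n tokens split into areas."""
    return num_areas * (n // num_areas) ** 2


n = 40 * 40  # a 40 x 40 feature map, chosen only for illustration
print(attention_score_count(n), attention_score_count(n, num_areas=4))
```

With four areas, the number of pairwise scores drops to a quarter of what full attention needs, which is where the saving comes from.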

3. R-ELAN for Optimized Feature Processing

YOLO v12 also introduces R-ELAN (Residual Efficient Layer Aggregation Networks), which adds residual connections to the ELAN design and streamlines feature aggregation. This makes the model easier to optimize and more efficient on complex object detection tasks without increasing computational demands.

YOLO v12 Model Variants

YOLO v12 comes in five different variants, catering to different applications:

- N (Nano) & S (Small): Designed for real-time applications where speed is crucial.

- M (Medium): Balances accuracy and speed, suitable for general-purpose tasks.

- L (Large) & X (Extra Large): Optimized for high-precision tasks where accuracy is prioritized over speed.
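The comparison code later in this article loads weights by file name ("yolo11s.pt", "yolo12s.pt"). The sketch below assumes the other variants follow the same naming pattern with the letters n, s, m, l, and x; the helper names are hypothetical, and the loader only works if the `ultralytics` package is installed and the weights are available.

```python
VARIANTS = {
    "n": "Nano",
    "s": "Small",
    "m": "Medium",
    "l": "Large",
    "x": "Extra Large",
}


def weights_name(variant: str, version: int = 12) -> str:
    """Build a weights file name such as 'yolo12s.pt' (assumed naming pattern)."""
    key = variant.lower()
    if key not in VARIANTS:
        raise ValueError(f"unknown variant {variant!r}; expected one of {sorted(VARIANTS)}")
    return f"yolo{version}{key}.pt"


def load_detector(variant: str = "s", version: int = 12):
    """Load a detection model; ultralytics is imported lazily so the helper above works without it."""
    from ultralytics import YOLO

    return YOLO(weights_name(variant, version))


print(weights_name("s"))  # yolo12s.pt
```

A typical call would be `results = load_detector("n")("image.jpg")`, where "image.jpg" is a placeholder path.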


Let’s compare YOLO v11 and YOLO v12 Models

We’ll be experimenting with YOLO v11 and YOLO v12 small models to understand their performance across various tasks like object counting, heatmaps, and speed estimation.

1. Object Counting

YOLO v11

```python
import cv2
from ultralytics import solutions

cap = cv2.VideoCapture("highway.mp4")
assert cap.isOpened(), "Error reading video file"
w, h, fps = (int(cap.get(cv2.CAP_PROP_FRAME_WIDTH)), int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT)), int(cap.get(cv2.CAP_PROP_FPS)))

# Define region points (lower rectangle used as the counting region)
region_points = [(20, 1500), (1080, 1500), (1080, 1460), (20, 1460)]

# Video writer (MP4 format); the file name includes the model so the
# YOLO v12 run does not overwrite this output
video_writer = cv2.VideoWriter("object_counting_output_yolov11.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# Init ObjectCounter
counter = solutions.ObjectCounter(
    show=False,  # Disable internal window display
    region=region_points,
    model="yolo11s.pt",
)

# Process video
while cap.isOpened():
    success, im0 = cap.read()
    if not success:
        print("Video frame is empty or video processing has been successfully completed.")
        break

    # Method name as used in this article; newer Ultralytics releases may
    # expose a different call, so check the docs for your installed version
    im0 = counter.count(im0)

    # Resize to fit the screen (optional; scales down large videos)
    im0_resized = cv2.resize(im0, (640, 360))  # Adjust resolution as needed

    # Show the resized frame and save the full-size frame
    cv2.imshow("Object Counting", im0_resized)
    video_writer.write(im0)

    # Press 'q' to exit
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

cap.release()
video_writer.release()
cv2.destroyAllWindows()
```

YOLO v12

```python
import cv2
from ultralytics import solutions

cap = cv2.VideoCapture("highway.mp4")
assert cap.isOpened(), "Error reading video file"
w, h, fps = (int(cap.get(cv2.CAP_PROP_FRAME_WIDTH)), int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT)), int(cap.get(cv2.CAP_PROP_FPS)))

# Define region points (lower rectangle used as the counting region)
region_points = [(20, 1500), (1080, 1500), (1080, 1460), (20, 1460)]

# Video writer (MP4 format); the file name includes the model so this run
# does not overwrite the YOLO v11 output
video_writer = cv2.VideoWriter("object_counting_output_yolov12.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# Init ObjectCounter
counter = solutions.ObjectCounter(
    show=False,  # Disable internal window display
    region=region_points,
    model="yolo12s.pt",
)

# Process video
while cap.isOpened():
    success, im0 = cap.read()
    if not success:
        print("Video frame is empty or video processing has been successfully completed.")
        break

    # Method name as used in this article; newer Ultralytics releases may
    # expose a different call, so check the docs for your installed version
    im0 = counter.count(im0)

    # Resize to fit the screen (optional; scales down large videos)
    im0_resized = cv2.resize(im0, (640, 360))  # Adjust resolution as needed

    # Show the resized frame and save the full-size frame
    cv2.imshow("Object Counting", im0_resized)
    video_writer.write(im0)

    # Press 'q' to exit
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

cap.release()
video_writer.release()
cv2.destroyAllWindows()
```
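The hard-coded region points in the counting scripts assume a specific frame size (a y-coordinate of 1500 only makes sense for a frame taller than that). A minimal sketch of deriving the same kind of rectangular band from the frame dimensions is shown below; `band_region` and its parameters are hypothetical helpers, not part of Ultralytics.

```python
def band_region(w, h, y_top_frac, y_bottom_frac, margin_px=20):
    """Return a horizontal band as four (x, y) points.

    Points are ordered bottom-left, bottom-right, top-right, top-left,
    matching the order used in the counting scripts above.
    """
    if not 0.0 <= y_top_frac < y_bottom_frac <= 1.0:
        raise ValueError("expected 0 <= y_top_frac < y_bottom_frac <= 1")
    x_left, x_right = margin_px, w - margin_px
    y_top, y_bottom = round(h * y_top_frac), round(h * y_bottom_frac)
    return [(x_left, y_bottom), (x_right, y_bottom), (x_right, y_top), (x_left, y_top)]


# Frame size chosen only to reproduce the numbers used in the scripts.
print(band_region(1100, 2000, 0.73, 0.75))
```

The returned list can be passed as `region=` in place of the fixed coordinates, using the `w` and `h` read from the video.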

2. Heatmaps

YOLO v11

```python
import cv2
from ultralytics import solutions

cap = cv2.VideoCapture("mall_arial.mp4")
assert cap.isOpened(), "Error reading video file"
w, h, fps = (int(cap.get(x)) for x in (cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT, cv2.CAP_PROP_FPS))

# Video writer
video_writer = cv2.VideoWriter("heatmap_output_yolov11.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# In case you want to apply object counting + heatmaps, you can pass region points.
# region_points = [(20, 400), (1080, 400)]  # Define line points
# region_points = [(20, 400), (1080, 400), (1080, 360), (20, 360)]  # Define region points
# region_points = [(20, 400), (1080, 400), (1080, 360), (20, 360), (20, 400)]  # Define polygon points

# Init heatmap
heatmap = solutions.Heatmap(
    show=True,  # Display the output
    model="yolo11s.pt",  # Path to the YOLO11 model file
    colormap=cv2.COLORMAP_PARULA,  # Colormap of heatmap
    # region=region_points,  # If you want to do object counting with heatmaps, you can pass region_points
    # classes=[0, 2],  # If you want to generate a heatmap for specific classes, e.g. person and car
    # show_in=True,  # Display in counts
    # show_out=True,  # Display out counts
    # line_width=2,  # Adjust the line width for bounding boxes and text display
)

# Process video
while cap.isOpened():
    success, im0 = cap.read()
    if not success:
        print("Video frame is empty or video processing has been successfully completed.")
        break

    # Method name as used in this article; newer Ultralytics releases may
    # expose a different call, so check the docs for your installed version
    im0 = heatmap.generate_heatmap(im0)
    im0_resized = cv2.resize(im0, (w, h))
    video_writer.write(im0_resized)

cap.release()
video_writer.release()
cv2.destroyAllWindows()
```

YOLO v12

```python
import cv2
from ultralytics import solutions

cap = cv2.VideoCapture("mall_arial.mp4")
assert cap.isOpened(), "Error reading video file"
w, h, fps = (int(cap.get(x)) for x in (cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT, cv2.CAP_PROP_FPS))

# Video writer
video_writer = cv2.VideoWriter("heatmap_output_yolov12.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# In case you want to apply object counting + heatmaps, you can pass region points.
# region_points = [(20, 400), (1080, 400)]  # Define line points
# region_points = [(20, 400), (1080, 400), (1080, 360), (20, 360)]  # Define region points
# region_points = [(20, 400), (1080, 400), (1080, 360), (20, 360), (20, 400)]  # Define polygon points

# Init heatmap
heatmap = solutions.Heatmap(
    show=True,  # Display the output
    model="yolo12s.pt",  # Path to the YOLO12 model file
    colormap=cv2.COLORMAP_PARULA,  # Colormap of heatmap
    # region=region_points,  # If you want to do object counting with heatmaps, you can pass region_points
    # classes=[0, 2],  # If you want to generate a heatmap for specific classes, e.g. person and car
    # show_in=True,  # Display in counts
    # show_out=True,  # Display out counts
    # line_width=2,  # Adjust the line width for bounding boxes and text display
)

# Process video
while cap.isOpened():
    success, im0 = cap.read()
    if not success:
        print("Video frame is empty or video processing has been successfully completed.")
        break

    # Method name as used in this article; newer Ultralytics releases may
    # expose a different call, so check the docs for your installed version
    im0 = heatmap.generate_heatmap(im0)
    im0_resized = cv2.resize(im0, (w, h))
    video_writer.write(im0_resized)

cap.release()
video_writer.release()
cv2.destroyAllWindows()
```
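Conceptually, a detection heatmap is an accumulator: every frame, the pixels covered by detected objects get a little "hotter", and the result is normalized before a colormap is applied. The NumPy sketch below illustrates that idea with rectangular boxes; it is an assumption-laden simplification, not the Ultralytics `Heatmap` implementation, and the function names are hypothetical.

```python
import numpy as np


def accumulate_heatmap(heat, boxes, weight=1.0):
    """Add `weight` to every pixel covered by each (x1, y1, x2, y2) box, in place."""
    for x1, y1, x2, y2 in boxes:
        heat[y1:y2, x1:x2] += weight
    return heat


def normalize_heatmap(heat):
    """Scale the accumulator to 0..255 so a colormap can be applied to it."""
    peak = heat.max()
    if peak == 0:
        return np.zeros(heat.shape, dtype=np.uint8)
    return (heat / peak * 255).astype(np.uint8)


heat = np.zeros((4, 6), dtype=np.float32)
accumulate_heatmap(heat, [(0, 0, 2, 2)])  # detections from frame 1
accumulate_heatmap(heat, [(1, 1, 3, 3)])  # detections from frame 2
print(normalize_heatmap(heat))
```

In a real pipeline the normalized array would be passed to something like `cv2.applyColorMap` and blended with the frame.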

3. Speed Estimation

YOLO v11

```python
import cv2
import numpy as np
from ultralytics import solutions

cap = cv2.VideoCapture("cars_on_road.mp4")
assert cap.isOpened(), "Error reading video file"

# Capture video properties
w = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))
h = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
fps = int(cap.get(cv2.CAP_PROP_FPS))

# Video writer
video_writer = cv2.VideoWriter("speed_management_yolov11.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# Define speed region points (adjust for your video resolution)
speed_region = [(300, h - 200), (w - 100, h - 200), (w - 100, h - 270), (300, h - 270)]

# Initialize SpeedEstimator
speed = solutions.SpeedEstimator(
    show=False,  # Disable internal window display
    model="yolo11s.pt",  # Path to the YOLO model file
    region=speed_region,  # Pass region points
    # classes=[0, 2],  # Optional: Filter specific object classes
    # line_width=2,  # Optional: Adjust the line width
)

# Process video
while cap.isOpened():
    success, im0 = cap.read()
    if not success:
        print("Video frame is empty or video processing has been successfully completed.")
        break

    # Estimate speed and draw bounding boxes (method name as used in this
    # article; newer Ultralytics releases may expose a different call)
    out = speed.estimate_speed(im0)

    # Draw the speed region on the frame (cv2.polylines expects int32 points)
    cv2.polylines(out, [np.array(speed_region, dtype=np.int32)], isClosed=True, color=(0, 255, 0), thickness=2)

    # Resize the frame to fit the screen
    im0_resized = cv2.resize(out, (1280, 720))

    # Show the resized frame and save the full-size frame
    cv2.imshow("Speed Estimation", im0_resized)
    video_writer.write(out)

    # Press 'q' to exit
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

cap.release()
video_writer.release()
cv2.destroyAllWindows()
```

YOLO v12

```python
import cv2
import numpy as np
from ultralytics import solutions

cap = cv2.VideoCapture("cars_on_road.mp4")
assert cap.isOpened(), "Error reading video file"

# Capture video properties
w = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))
h = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
fps = int(cap.get(cv2.CAP_PROP_FPS))

# Video writer
video_writer = cv2.VideoWriter("speed_management_yolov12.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# Define speed region points (adjust for your video resolution)
speed_region = [(300, h - 200), (w - 100, h - 200), (w - 100, h - 270), (300, h - 270)]

# Initialize SpeedEstimator
speed = solutions.SpeedEstimator(
    show=False,  # Disable internal window display
    model="yolo12s.pt",  # Path to the YOLO model file
    region=speed_region,  # Pass region points
    # classes=[0, 2],  # Optional: Filter specific object classes
    # line_width=2,  # Optional: Adjust the line width
)

# Process video
while cap.isOpened():
    success, im0 = cap.read()
    if not success:
        print("Video frame is empty or video processing has been successfully completed.")
        break

    # Estimate speed and draw bounding boxes (method name as used in this
    # article; newer Ultralytics releases may expose a different call)
    out = speed.estimate_speed(im0)

    # Draw the speed region on the frame (cv2.polylines expects int32 points)
    cv2.polylines(out, [np.array(speed_region, dtype=np.int32)], isClosed=True, color=(0, 255, 0), thickness=2)

    # Resize the frame to fit the screen
    im0_resized = cv2.resize(out, (1280, 720))

    # Show the resized frame and save the full-size frame
    cv2.imshow("Speed Estimation", im0_resized)
    video_writer.write(out)

    # Press 'q' to exit
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

cap.release()
video_writer.release()
cv2.destroyAllWindows()
```
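The arithmetic behind a speed estimate is simple once an object has been tracked across frames: distance travelled divided by elapsed time. The sketch below assumes a known, constant `meters_per_pixel` calibration, which real footage rarely has because of perspective; it illustrates the calculation only and is not how Ultralytics' `SpeedEstimator` is implemented. All names are hypothetical.

```python
def estimate_speed_kmh(pixel_distance, meters_per_pixel, frames_elapsed, fps):
    """Speed in km/h from pixel displacement over a number of frames."""
    if frames_elapsed <= 0 or fps <= 0:
        raise ValueError("frames_elapsed and fps must be positive")
    meters = pixel_distance * meters_per_pixel
    seconds = frames_elapsed / fps
    return meters / seconds * 3.6  # convert m/s to km/h


# Example: 100 px in 10 frames at 30 FPS, with 0.05 m per pixel (assumed calibration)
print(round(estimate_speed_kmh(100, 0.05, 10, 30), 1))  # 54.0
```

The calibration value dominates the result, so absolute speeds from either model are only as good as that assumption.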


Expert Opinions on YOLOv11 and YOLOv12

Muhammad Rizwan Munawar — Computer Vision Engineer at Ultralytics

“YOLOv12 introduces flash attention, which enhances accuracy, but it requires careful CUDA setup. It’s a solid step forward, especially for complex detection tasks, though YOLOv11 remains faster for real-time needs. In short, choose YOLOv12 for accuracy and YOLOv11 for speed.”

LinkedIn post: Is YOLOv12 really a state-of-the-art model?

Muhammad Rizwan recently tested YOLOv11 and YOLOv12 side by side to break down their real-world performance. His findings highlight the trade-offs between the two models:

- Frames Per Second (FPS): YOLOv11 maintains an average of 40 FPS, while YOLOv12 lags behind at 30 FPS. This makes YOLOv11 the better choice for real-time applications where speed is critical, such as traffic monitoring or live video feeds.

- Training Time: YOLOv12 takes about 20% longer to train than YOLOv11. On a small dataset with 130 training images and 43 validation images, YOLOv11 completed training in 0.009 hours, while YOLOv12 needed 0.011 hours. While this might seem minor for small datasets, the difference becomes significant for larger-scale projects.

- Accuracy: Both models achieved similar accuracy after fine-tuning for 10 epochs on the same dataset. YOLOv12 didn’t dramatically outperform YOLOv11 in terms of accuracy, suggesting the newer model’s improvements lie more in architectural enhancements than raw detection precision.

- Flash Attention: YOLOv12 uses flash attention, which speeds up the attention layers and reduces their memory traffic. However, there is a catch: this feature isn't natively supported on CPUs, and enabling it with CUDA requires careful version-specific setup. For teams without powerful GPUs or those working on edge devices, this can become a roadblock.
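The relative differences behind the FPS and training-time figures above can be checked with a one-line formula. The helper below is an illustrative sketch (the function name is hypothetical) using only the numbers quoted in the list.

```python
def percent_change(baseline: float, new: float) -> float:
    """Relative change from `baseline` to `new`, in percent."""
    return (new - baseline) / baseline * 100.0


# FPS: YOLOv11 at 40 versus YOLOv12 at 30
print(percent_change(40, 30))  # -25.0

# Training time in hours: YOLOv11 at 0.009 versus YOLOv12 at 0.011
print(round(percent_change(0.009, 0.011), 1))  # 22.2
```

The training times are quoted to only one significant figure, so the 22% result is consistent with the "about 20% longer" stated above.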

The PC specifications used for testing:

- GPU: NVIDIA RTX 3050

- CPU: Intel Core i5-10400 @ 2.90 GHz

- RAM: 64 GB

The model specifications:

- Models = yolo11n.pt and yolo12n.pt

- Image size = 640 for inference
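FPS figures like the ones reported above can be reproduced by timing a per-frame function over a fixed set of frames. The sketch below is a generic timing harness under stated assumptions: `process_frame` stands in for whatever inference call is being measured, and the warm-up count is arbitrary. It is not the procedure used in the LinkedIn test.

```python
import time
from typing import Any, Callable, Iterable


def measure_fps(process_frame: Callable[[Any], Any], frames: Iterable[Any], warmup: int = 0) -> float:
    """Average frames per second of `process_frame` over `frames`.

    The first `warmup` frames are processed but not timed, since the first
    few inferences on a GPU are often slower than steady state.
    """
    frames = list(frames)
    for frame in frames[:warmup]:
        process_frame(frame)
    timed = frames[warmup:]
    if not timed:
        raise ValueError("no frames left to time after warm-up")
    start = time.perf_counter()
    for frame in timed:
        process_frame(frame)
    elapsed = time.perf_counter() - start
    return len(timed) / elapsed


# Stand-in workload: each "frame" takes about 10 ms to process.
print(measure_fps(lambda frame: time.sleep(0.01), range(20), warmup=2))
```

For a fair comparison, both models should be timed on the same frames, image size, and hardware, with display and video writing kept outside the timed function.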

Conclusion

YOLO v12 marks a significant leap forward in real-time object detection, combining CNN speed with Transformer-like attention mechanisms. With improved accuracy, lower computational costs, and a range of model variants, YOLO v12 is poised to redefine the landscape of real-time vision applications. Whether for autonomous vehicles, security surveillance, or medical imaging, YOLO v12 sets a new standard for real-time object detection efficiency.

What’s Next?

- YOLO v13 Possibilities: Will future versions push the attention mechanisms even further?

- Edge Device Optimization: Can Flash Attention or Area Attention be optimized for lower-power devices?

To help you better understand the differences, I’ve attached some code snippets and output results in the comparison section. These examples illustrate how both YOLOv11 and YOLOv12 perform in real-world scenarios, from object counting to speed estimation and heatmaps. I’m excited to see how you guys perceive this new release! Are the improvements in accuracy and attention mechanisms enough to justify the trade-offs in speed? Or do you think YOLOv11 still holds its ground for most applications?
