本篇文章的主要主要讲述的是对PHP导出的海量数据进行优化,具有一定的参考价值,有需要的朋友可以看看。

导出数据量很大的情况下,生成excel的内存需求非常庞大,服务器吃不消,这个时候考虑生成csv来解决问题,cvs读写性能比excel高。

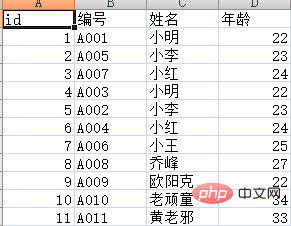

测试表student 数据(大家可以脚本插入300多万测数据。这里只给个简单的示例了)

SET NAMES utf8mb4; SET FOREIGN_KEY_CHECKS = 0; -- ---------------------------- -- Table structure for student -- ---------------------------- DROP TABLE IF EXISTS `student`; CREATE TABLE `student` ( `ID` int(11) NOT NULL AUTO_INCREMENT, `StuNo` varchar(32) CHARACTER SET utf8 COLLATE utf8_general_ci NOT NULL, `StuName` varchar(10) CHARACTER SET utf8 COLLATE utf8_general_ci NOT NULL, `StuAge` int(11) NULL DEFAULT NULL, PRIMARY KEY (`ID`) USING BTREE ) ENGINE = InnoDB AUTO_INCREMENT = 12 CHARACTER SET = utf8 COLLATE = utf8_general_ci ROW_FORMAT = Compact; -- ---------------------------- -- Records of student -- ---------------------------- INSERT INTO `student` VALUES (1, 'A001', '小明', 22); INSERT INTO `student` VALUES (2, 'A005', '小李', 23); INSERT INTO `student` VALUES (3, 'A007', '小红', 24); INSERT INTO `student` VALUES (4, 'A003', '小明', 22); INSERT INTO `student` VALUES (5, 'A002', '小李', 23); INSERT INTO `student` VALUES (6, 'A004', '小红', 24); INSERT INTO `student` VALUES (7, 'A006', '小王', 25); INSERT INTO `student` VALUES (8, 'A008', '乔峰', 27); INSERT INTO `student` VALUES (9, 'A009', '欧阳克', 22); INSERT INTO `student` VALUES (10, 'A010', '老顽童', 34); INSERT INTO `student` VALUES (11, 'A011', '黄老邪', 33); SET FOREIGN_KEY_CHECKS = 1;

导出脚本export.php

<?php

set_time_limit(0);

ini_set('memory_limit', '128M');

$fileName = date('YmdHis', time());

header('Content-Encoding: UTF-8');

header("Content-type:application/vnd.ms-excel;charset=UTF-8");

header('Content-Disposition: attachment;filename="' . $fileName . '.csv"');

//注意,数据量在大的情况下。比如导出几十万到几百万,会出现504 Gateway Time-out,请修改php.ini的max_execution_time参数

//打开php标准输出流以写入追加的方式打开

$fp = fopen('php://output', 'a');

//连接数据库

$dbhost = '127.0.0.1';

$dbuser = 'root';

$dbpwd = 'root';

$con = mysqli_connect($dbhost, $dbuser, $dbpwd);

if (mysqli_connect_errno())

die('connect error');

$database = 'test';//选择数据库

mysqli_select_db($con, $database);

mysqli_query($con, "set names UTF8");//如果需要请设置编码

//用fputcsv从数据库中导出1百万的数据,比如我们每次取1万条数据,分100步来执行

//一次性读取1万条数据,也可以把$nums调小,$step相应增大。

$step = 100;

$nums = 10000;

$where = "where 1=1"; //筛选条件,可自行添加

//设置标题

$title = array('id', '编号', '姓名', '年龄'); //注意这里是小写id,否则ID命名打开会提示Excel 已经检测到"xxx.xsl"是SYLK文件,但是不能将其加载: CSV 文或者XLS文件的前两个字符是大写字母"I","D"时,会发生此问题。

foreach ($title as $key => $item)

$title[$key] = iconv("UTF-8", "GB2312//IGNORE", $item);

fputcsv($fp, $title);

for ($s = 1; $s <= $step; $s++) {

$start = ($s - 1) * $nums;

$result = mysqli_query($con, "SELECT ID,StuNo,StuName,StuAge FROM `student` " . $where . " ORDER BY `ID` LIMIT {$start},{$nums}");

if ($result) {

while ($row = mysqli_fetch_assoc($result)) {

foreach ($row as $key => $item)

$row[$key] = iconv("UTF-8", "GBK", $item); //这里必须转码,不然会乱码

fputcsv($fp, $row);

}

mysqli_free_result($result); //释放结果集资源

ob_flush(); //每1万条数据就刷新缓冲区

flush();

}

}

mysqli_close($con);//断开连接导出效果:

相关教程:PHP视频教程

以上是如何对PHP导出的海量数据进行优化的详细内容。更多信息请关注PHP中文网其他相关文章!